
This chapter discusses the concepts surrounding architecture artifacts and then describes the artifacts that are recommended to be created for each phase within the Architecture Development Method (ADM). It also presents guidance for developing a set of views, some or all of which may be appropriate in a particular architecture development.

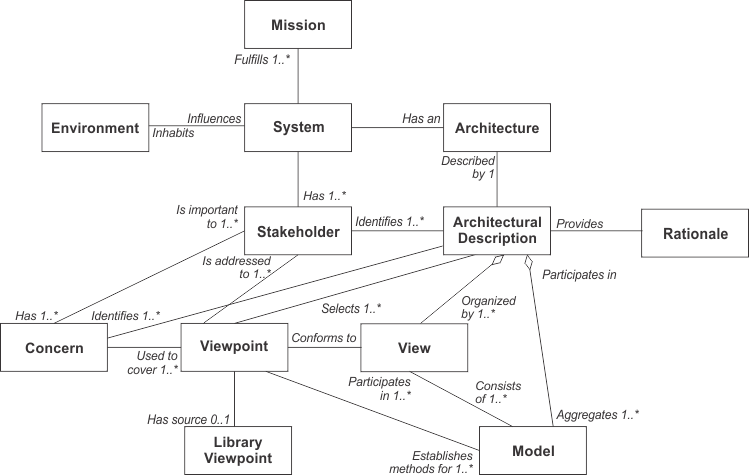

Architectural artifacts are created in order to describe a system, solution, or state of the enterprise. The concepts discussed in this section have been adapted from more formal definitions contained in ISO/IEC 42010:2007 and illustrated in Figure 35-1.

A "system" is a collection of components organized to accomplish a specific function or set of functions.

The "architecture" of a system is the system's fundamental organization, embodied in its components, their relationships to each other and to the environment, and the principles guiding its design and evolution.

An "architecture description" is a collection of artifacts that document an architecture. In TOGAF, architecture views are the key artifacts in an architecture description.

"Stakeholders" are people who have key roles in, or concerns about, the system; for example, as users, developers, or managers. Different stakeholders with different roles in the system will have different concerns. Stakeholders can be individuals, teams, or organizations (or classes thereof).

"Concerns" are the key interests that are crucially important to the stakeholders in the system, and determine the acceptability of the system. Concerns may pertain to any aspect of the system's functioning, development, or operation, including considerations such as performance, reliability, security, distribution, and evolvability.

A "view" is a representation of a whole system from the perspective of a related set of concerns.

In capturing or representing the design of a system architecture, the architect will typically create one or more architecture models, possibly using different tools. A view will comprise selected parts of one or more models, chosen so as to demonstrate to a particular stakeholder or group of stakeholders that their concerns are being adequately addressed in the design of the system architecture.

A "viewpoint" defines the perspective from which a view is taken. More specifically, a viewpoint defines: how to construct and use a view (by means of an appropriate schema or template); the information that should appear in the view; the modeling techniques for expressing and analyzing the information; and a rationale for these choices (e.g., by describing the purpose and intended audience of the view).

In summary, then, architecture views are representations of the overall architecture in terms meaningful to stakeholders. They enable the architecture to be communicated to and understood by the stakeholders, so they can verify that the system will address their concerns.

Concerns are the root of the process of decomposition into requirements. Concerns are represented in the architecture by these requirements. Requirements should be SMART (e.g., specific metrics).

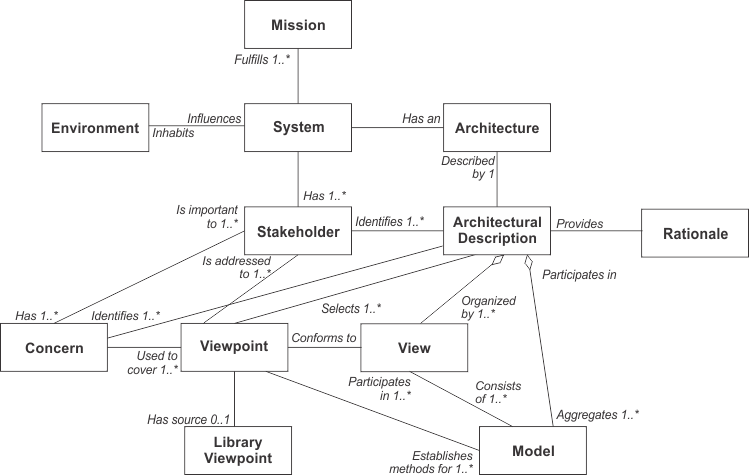

For many architectures, a useful viewpoint is that of business domains, which can be illustrated by an example from The Open Group itself.

The viewpoint is specified as follows:

| Viewpoint Element | Description |
|---|---|
| Stakeholders | Management Board, Chief Executive Officer |
| Concerns | Show the top-level relationships between geographical sites and business functions. |
| Modeling technique | Nested boxes diagram. |

The corresponding view of The Open Group (in 2008) is shown in Figure 35-2.
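A viewpoint specification such as the one above can also be recorded as structured data, so that it can be kept in a library and re-used. The following is a minimal Python sketch; the class name `Viewpoint`, its field names, and the helper `describe` are illustrative assumptions, not part of TOGAF or ISO/IEC 42010:2007.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Viewpoint:
    """A viewpoint specification: who it serves, what it addresses, and how."""
    name: str
    stakeholders: Tuple[str, ...]
    concerns: Tuple[str, ...]
    modeling_technique: str


# The business domains viewpoint from the specification above.
# The name string is an illustrative label chosen for this sketch.
business_domains = Viewpoint(
    name="Business domains",
    stakeholders=("Management Board", "Chief Executive Officer"),
    concerns=(
        "Show the top-level relationships between geographical sites "
        "and business functions.",
    ),
    modeling_technique="Nested boxes diagram",
)


def describe(viewpoint: Viewpoint) -> str:
    """Render a viewpoint as a one-line, human-readable summary."""
    return (
        f"{viewpoint.name}: for {', '.join(viewpoint.stakeholders)}; "
        f"technique: {viewpoint.modeling_technique}"
    )


print(describe(business_domains))
```

Making the record immutable (`frozen=True`) reflects the idea that a library viewpoint is a stable template from which many views can be generated.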

The choice of which particular architecture views to develop is one of the key decisions that the architect has to make.

The architect has a responsibility for ensuring the completeness (fitness-for-purpose) of the architecture, in terms of adequately addressing all the pertinent concerns of its stakeholders; and the integrity of the architecture, in terms of connecting all the various views to each other, satisfactorily reconciling the conflicting concerns of different stakeholders, and showing the trade-offs made in so doing (as between security and performance, for example).

The choice has to be constrained by considerations of practicality, and by the principle of fitness-for-purpose (i.e., the architecture should be developed only to the point at which it is fit-for-purpose, and not reiterated ad infinitum as an academic exercise).

As explained in Part II: Architecture Development Method (ADM), the development of architecture views is an iterative process. The typical progression is from business to technology, using a technique such as business scenarios (see Part III, 26. Business Scenarios and Business Goals) to properly identify all pertinent concerns; and from high-level overview to lower-level detail, continually referring back to the concerns and requirements of the stakeholders throughout the process.

Moreover, each of these progressions has to be made for two distinct environments: the existing environment (referred to as the baseline in the ADM) and the target environment. The architect must develop pertinent architecture views of both the Baseline Architecture and the Target Architecture. This provides the context for the gap analysis at the end of Phases B, C, and D of the ADM, which establishes the elements of the Baseline Architecture to be carried forward and the elements to be added, removed, or replaced.

This whole process is explained in Part III, 27. Gap Analysis.
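The core of the comparison between baseline and target can be sketched with set operations. This is only an illustration under simplifying assumptions: the element names are hypothetical, the function name `gap_analysis` is our own, and a plain set difference cannot tell whether a removed element was replaced by an added one, which still requires the architect's judgment.

```python
from typing import Dict, Set


def gap_analysis(baseline: Set[str], target: Set[str]) -> Dict[str, Set[str]]:
    """Classify architecture elements by comparing baseline and target."""
    return {
        "carried_forward": baseline & target,  # present in both environments
        "added": target - baseline,            # new in the target
        "removed": baseline - target,          # dropped from the baseline
    }


# Hypothetical element names, for illustration only.
baseline = {"Order entry", "Paper invoicing", "Customer database"}
target = {"Order entry", "Customer database", "Electronic invoicing"}

gaps = gap_analysis(baseline, target)
for category, elements in gaps.items():
    print(category, sorted(elements))
```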

As mentioned above, at the present time TOGAF encourages but does not mandate the use of ISO/IEC 42010:2007. The following description therefore covers both the situation where ISO/IEC 42010:2007 has been adopted and where it has not.

ISO/IEC 42010:2007 itself does not require any specific process for developing viewpoints or creating views from them. Where ISO/IEC 42010:2007 has been adopted and become well-established practice within an organization, it will often be possible to create the required views for a particular architecture by following these steps:

This approach can be expected to bring the following benefits:

However, situations can always arise in which a view is needed for which no appropriate viewpoint has been predefined. This is also the situation, of course, when an organization has not yet incorporated ISO/IEC 42010:2007 into its architecture practice and established a library of viewpoints.

In each case, the architect may choose to develop a new viewpoint that will cover the outstanding need, and then generate a view from it. (This is ISO/IEC 42010:2007 recommended practice.) Alternatively, a more pragmatic approach can be equally successful: the architect can create an ad hoc view for a specific system and later consider whether a generalized form of the implicit viewpoint should be defined explicitly and saved in a library, so that it can be re-used. (This is one way of establishing a library of viewpoints initially.)

Whatever the context, the architect should be aware that every view has a viewpoint, at least implicitly, and that defining the viewpoint in a systematic way (as recommended by ISO/IEC 42010:2007) will help in assessing its effectiveness; i.e., does the viewpoint cover the relevant stakeholder concerns?
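Once a viewpoint has been written down explicitly, the coverage question can be checked mechanically. The sketch below assumes that concerns are recorded as simple labels; the function name `uncovered_concerns` and the sample data are hypothetical.

```python
from typing import Dict, Set


def uncovered_concerns(
    stakeholder_concerns: Dict[str, Set[str]],
    viewpoint_concerns: Set[str],
) -> Dict[str, Set[str]]:
    """Return, per stakeholder, the concerns the viewpoint does not address."""
    gaps = {}
    for stakeholder, concerns in stakeholder_concerns.items():
        missing = concerns - viewpoint_concerns
        if missing:
            gaps[stakeholder] = missing
    return gaps


# Hypothetical stakeholders and concerns, for illustration only.
stakeholder_concerns = {
    "users": {"performance", "reliability"},
    "developers": {"security"},
}
viewpoint_concerns = {"performance", "security"}

print(uncovered_concerns(stakeholder_concerns, viewpoint_concerns))
```

An empty result suggests that the viewpoint addresses every recorded concern; a non-empty result indicates where a further viewpoint, or an extension of this one, may be needed.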

The need for architecture views, and the process of developing them following the ADM, are explained above. This section describes the relationships between architecture views, the tools used to develop and analyze them, and a standard language enabling interoperability between the tools.

In order to achieve the goals of completeness and integrity in an architecture, architecture views are usually developed, visualized, communicated, and managed using a tool.

In the current state of the market, different tools normally have to be used to develop and analyze different views of the architecture. It is highly desirable that an architecture description be encoded in a standard language, to enable a standard approach to the description of architecture semantics and their re-use among different tools.

A viewpoint is also normally developed, visualized, communicated, and managed using a tool, and it is also highly desirable that standard viewpoints (i.e., templates or schemas) be developed, so that different tools that deal in the same views can interoperate, the fundamental elements of an architecture can be re-used, and the architecture description can be shared among tools.

Issues relating to the evaluation of tools for architecture work are discussed in detail in Part V, 42. Tools for Architecture Development.

To illustrate the concepts of views and viewpoints, consider the example of a very simple airport system with two different stakeholders: the pilot and the air traffic controller.

One view can be developed from the viewpoint of the pilot, which addresses the pilot's concerns. Equally, another view can be developed from the viewpoint of the air traffic controller. Neither view completely describes the system in its entirety, because the viewpoint of each stakeholder constrains (and reduces) how each sees the overall system.

The viewpoint of the pilot comprises some concerns that are not relevant to the controller, such as passengers and fuel, while the viewpoint of the controller comprises some concerns not relevant to the pilot, such as other planes. There are also elements shared between the two viewpoints, such as the communication model between the pilot and the controller, and the vital information about the plane itself.

A viewpoint is a model (or description) of the information contained in a view. In our example, one viewpoint is the description of how the pilot sees the system, and the other viewpoint is how the controller sees the system.

Pilots describe the system from their perspective, using a model of their position and vector toward or away from the runway. All pilots use this model, and the model has a specific language that is used to capture information and populate the model.

Controllers describe the system differently, using a model of the airspace and the locations and vectors of aircraft within the airspace. Again, all controllers use a common language derived from the common model in order to capture and communicate information pertinent to their viewpoint.

Fortunately, when controllers talk with pilots, they use a common communication language. (In other words, the models representing their individual viewpoints partially intersect.) Part of this common language is about location and vectors of aircraft, and is essential to safety.

So in essence each viewpoint is an abstract model of how all the stakeholders of a particular type - all pilots, or all controllers - view the airport system.

Tools exist to assist stakeholders, especially when they are interacting with complex models such as the model of an airspace, or the model of air flight.

The interface to the human user of a tool is typically close to the model and language associated with the viewpoint. The unique tools of the pilot are fuel, altitude, speed, and location indicators. The main tool of the controller is radar. The common tool is a radio.

To summarize from the above example, we can see that a view can subset the system through the perspective of the stakeholder, such as the pilot versus the controller. This subset can be described by an abstract model called a viewpoint, such as an air flight model versus an airspace model. This description of the view is documented in a partially specialized language, such as "pilot-speak" versus "controller-speak". Tools are used to assist the stakeholders, and they interface with each other in terms of the language derived from the viewpoint ("pilot-speak" versus "controller-speak").

When stakeholders use common tools, such as the radio contact between pilot and controller, a common language is essential.

Now let us map this example to the enterprise architecture. Consider two stakeholders in a new small computing system: the users and the developers.

The users of the system have a viewpoint that reflects their concerns when interacting with the system, and the developers of the system have a different viewpoint. Views that are developed to address either of the two viewpoints are unlikely to exhaustively describe the whole system, because each perspective reduces how each sees the system.

The viewpoint of the user comprises all the ways in which the user interacts with the system, without seeing internal details such as applications or Database Management Systems (DBMS).

The viewpoint of the developer is one of productivity and tools, and doesn't include things such as actual live data and connections with consumers.

However, there are things that are shared, such as descriptions of the processes that are enabled by the system and/or communications protocols set up for users to communicate problems directly to development.

In this example, one viewpoint is the description of how the user sees the system, and the other viewpoint is how the developer sees the system. Users describe the system from their perspective, using a model of availability, response time, and access to information. All users of the system use this model, and the model has a specific language.

Developers describe the system differently than users, using a model of software connected to hardware distributed over a network, etc. However, there are many types of developers (database, security, etc.) of the system, and they do not have a common language derived from the model.

Tools exist for both users and developers. Tools such as online help are there specifically for users, and attempt to use the language of the user. Many different tools exist for different types of developers, but they suffer from the lack of a common language that is required to bring the system together. It is difficult, if not impossible, in the current state of the tools market to have one tool interoperate with another tool.

Issues relating to the evaluation of tools for architecture work are discussed in detail in Part V, 42. Tools for Architecture Development.

This section attempts to deal with views in a structured manner, but this is by no means a complete treatise on views.

In general, TOGAF embraces the concepts and definitions presented in ISO/IEC 42010:2007, specifically the concepts that help guide the development of a view and make the view actionable. These concepts can be summarized as:

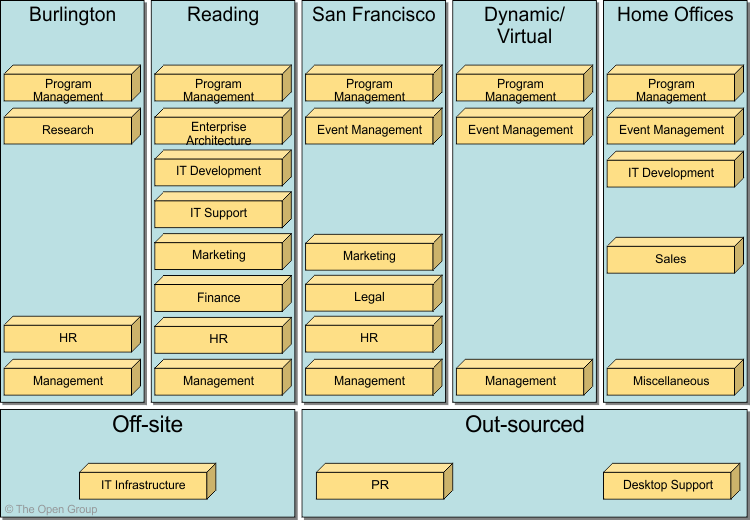

Figure 35-3 shows the artifacts that are associated with the core content metamodel and each of the content extensions.

The specific classes of artifact are as follows:

The recommended artifacts for production in each ADM phase are as follows.

The following describes catalogs, matrices, and diagrams that may be created within the Preliminary Phase, as listed in Part II, 6.5 Outputs.

The Principles catalog captures principles of the business and architecture principles that describe what a "good" solution or architecture should look like. Principles are used to evaluate and agree an outcome for architecture decision points. Principles are also used as a tool to assist in architectural governance of change initiatives.

The Principles catalog contains the following metamodel entities:

The following describes catalogs, matrices, and diagrams that may be created within Phase A (Architecture Vision) as listed in 7.5 Outputs.

The purpose of the Stakeholder Map matrix is to identify the stakeholders for the architecture engagement, their influence over the engagement, and their key questions, issues, or concerns that must be addressed by the architecture framework.

Understanding stakeholders and their requirements allows an architect to focus effort in areas that meet the needs of stakeholders (see Part III, 24. Stakeholder Management).

Due to the potentially sensitive nature of stakeholder mapping information and the fact that the Architecture Vision phase is intended to be conducted using informal modeling techniques, no specific metamodel entities will be used to generate a stakeholder map.

A Value Chain diagram provides a high-level orientation view of an enterprise and how it interacts with the outside world. In contrast to the more formal Functional Decomposition diagram developed within Phase B (Business Architecture), the Value Chain diagram focuses on presentational impact.

The purpose of this diagram is to quickly on-board and align stakeholders for a particular change initiative, so that all participants understand the high-level functional and organizational context of the architecture engagement.

A Solution Concept diagram provides a high-level orientation of the solution that is envisaged in order to meet the objectives of the architecture engagement. In contrast to the more formal and detailed architecture diagrams developed in the following phases, the solution concept represents a "pencil sketch" of the expected solution at the outset of the engagement.

This diagram may embody key objectives, requirements, and constraints for the engagement and also highlight work areas to be investigated in more detail with formal architecture modeling.

Its purpose is to quickly on-board and align stakeholders for a particular change initiative, so that all participants understand what the architecture engagement is seeking to achieve and how it is expected that a particular solution approach will meet the needs of the enterprise.

The following describes catalogs, matrices, and diagrams that may be created within Phase B (Business Architecture) as listed in 8.5 Outputs.

The purpose of the Organization/Actor catalog is to capture a definitive listing of all participants that interact with IT, including users and owners of IT systems.

The Organization/Actor catalog can be referenced when developing requirements in order to test for completeness.

For example, requirements for an application that services customers can be tested for completeness by verifying exactly which customer types need to be supported and whether there are any particular requirements or restrictions for user types.

The Organization/Actor catalog contains the following metamodel entities:

The purpose of the Driver/Goal/Objective catalog is to provide a cross-organizational reference of how an organization meets its drivers in practical terms through goals, objectives, and (optionally) measures.

Publishing a definitive breakdown of drivers, goals, and objectives allows change initiatives within the enterprise to identify synergies across the organization (e.g., multiple organizations attempting to achieve similar objectives), which in turn allow stakeholders to be identified and related change initiatives to be aligned or consolidated.

The Driver/Goal/Objective catalog contains the following metamodel entities:

The purpose of the Role catalog is to provide a listing of all authorization levels or zones within an enterprise. Frequently, application security or behavior is defined against locally understood concepts of authorization that create complex and unexpected consequences when combined on the user desktop.

If roles are defined, understood, and aligned across organizations and applications, this allows for a more seamless user experience and generally more secure applications, as administrators do not need to resort to workarounds in order to enable users to carry out their jobs.

In addition to supporting security definition for the enterprise, the Role catalog also forms a key input to identifying organizational change management impacts, defining job functions, and executing end-user training.

As each role implies access to a number of business functions, if any of these business functions are impacted, then change management will be required, organizational responsibilities may need to be redefined, and retraining may be needed.
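The dependency between roles and business functions described above can be sketched as a simple lookup. The role and function names below are hypothetical, and `impacted_roles` is an illustrative helper rather than anything defined by TOGAF.

```python
from typing import Dict, List, Set

# Hypothetical Role catalog extract: each role and the business
# functions it implies access to.
role_functions: Dict[str, Set[str]] = {
    "Claims handler": {"Register claim", "Assess claim"},
    "Finance clerk": {"Issue payment"},
    "Team lead": {"Assess claim", "Approve claim"},
}


def impacted_roles(
    role_functions: Dict[str, Set[str]],
    changed_functions: Set[str],
) -> List[str]:
    """Return the roles with access to at least one changed business function."""
    return sorted(
        role
        for role, functions in role_functions.items()
        if functions & changed_functions
    )


print(impacted_roles(role_functions, {"Assess claim"}))
```

The roles returned are candidates for change management, redefinition of responsibilities, and retraining.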

The Role catalog contains the following metamodel entities:

The purpose of the Business Service/Function catalog is to provide a functional decomposition in a form that can be filtered, reported on, and queried, as a supplement to graphical Functional Decomposition diagrams.

The Business Service/Function catalog can be used to identify capabilities of an organization and to understand the level at which governance is applied to the functions of an organization. This functional decomposition can be used to identify new capabilities required to support business change or may be used to determine the scope of change initiatives, applications, or technology components.

The Business Service/Function catalog contains the following metamodel entities:

The Location catalog provides a listing of all locations where an enterprise carries out business operations or houses architecturally relevant assets, such as data centers or end-user computing equipment.

Maintaining a definitive list of locations allows change initiatives to quickly define a location scope and to test for completeness when assessing current landscapes or proposed target solutions. For example, a project to upgrade desktop operating systems will need to identify all locations where desktop operating systems are deployed.

Similarly, when new systems are being implemented, a diagram of locations is essential in order to develop appropriate deployment strategies that comprehend both user and application location and identify location-related issues, such as internationalization, localization, timezone impacts on availability, distance impacts on latency, network impacts on bandwidth, and access.

The Location catalog contains the following metamodel entities:

The Process/Event/Control/Product catalog provides a hierarchy of processes, events that trigger processes, outputs from processes, and controls applied to the execution of processes. This catalog provides a supplement to any Process Flow diagrams that are created and allows an enterprise to filter, report, and query across organizations and processes to identify scope, commonality, or impact.

For example, the Process/Event/Control/Product catalog allows an enterprise to see relationships of processes to sub-processes in order to identify the full chain of impacts resulting from changing a high-level process.
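A query of this kind amounts to walking the process hierarchy. The following sketch assumes the catalog records each process together with its direct sub-processes and that the hierarchy contains no cycles; the process names and the function name `impact_chain` are hypothetical.

```python
from typing import Dict, List

# Hypothetical catalog extract: each process and its direct sub-processes.
sub_processes: Dict[str, List[str]] = {
    "Order to cash": ["Take order", "Fulfil order", "Invoice customer"],
    "Fulfil order": ["Pick goods", "Ship goods"],
}


def impact_chain(process: str, sub_processes: Dict[str, List[str]]) -> List[str]:
    """List a process followed by all of its descendants, depth first.

    Assumes the hierarchy is a tree (no process is its own ancestor).
    """
    chain = [process]
    for child in sub_processes.get(process, []):
        chain.extend(impact_chain(child, sub_processes))
    return chain


print(impact_chain("Order to cash", sub_processes))
```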

The Process/Event/Control/Product catalog contains the following metamodel entities:

The Contract/Measure catalog provides a listing of all agreed service contracts and (optionally) the measures attached to those contracts. It forms the master list of service levels agreed to across the enterprise.

The Contract/Measure catalog contains the following metamodel entities:

The purpose of this matrix is to depict the relationship interactions between organizations and business functions across the enterprise.

Understanding business interaction of an enterprise is important as it helps to highlight value chain and dependencies across organizations.

The Business Interaction matrix shows the following metamodel entities and relationships:

The purpose of this matrix is to show which actors perform which roles, supporting definition of security and skills requirements.

Understanding Actor-to-Role relationships is a key supporting tool in definition of training needs, user security settings, and organizational change management.

The Actor/Role matrix shows the following metamodel entities and relationships:

A Business Footprint diagram describes the links between business goals, organizational units, business functions, and services, and maps these functions to the technical components delivering the required capability.

A Business Footprint diagram provides a clear traceability between a technical component and the business goal that it satisfies, while also demonstrating ownership of the services identified.

A Business Footprint diagram demonstrates only the key facts linking organization unit functions to delivery services and is utilized as a communication platform for senior-level (CxO) stakeholders.

The Business Service/Information diagram shows the information needed to support one or more business services. The Business Service/Information diagram shows what data is consumed by or produced by a business service and may also show the source of information.

The Business Service/Information diagram shows an initial representation of the information present within the architecture and therefore forms a basis for elaboration and refinement within Phase C (Data Architecture).

The purpose of the Functional Decomposition diagram is to show on a single page the capabilities of an organization that are relevant to the consideration of an architecture. By examining the capabilities of an organization from a functional perspective, it is possible to quickly develop models of what the organization does without being dragged into extended debate on how the organization does it.

Once a basic Functional Decomposition diagram has been developed, it becomes possible to layer heat-maps on top of this diagram to show scope and decisions; for example, the capabilities to be implemented in different phases of a change program.

The purpose of the Product Lifecycle diagram is to assist in understanding the lifecycles of key entities within the enterprise. Understanding product lifecycles is becoming increasingly important with respect to environmental concerns, legislation, and regulation where products must be tracked from manufacture to disposal. Equally, organizations that create products that involve personal or sensitive information must have a detailed understanding of the product lifecycle during the development of Business Architecture in order to ensure rigor in design of controls, processes, and procedures. Examples of this would include credit cards, debit cards, store/loyalty cards, smart cards, user identity credentials (identity cards, passports, etc.).

The purpose of a Goal/Objective/Service diagram is to define the ways in which a service contributes to the achievement of a business vision or strategy.

Services are associated with the drivers, goals, objectives, and measures that they support, allowing the enterprise to understand which services contribute to similar aspects of business performance. The Goal/Objective/Service diagram also provides qualitative input on what constitutes high performance for a particular service.

A Business Use-Case diagram displays the relationships between consumers and providers of business services. Business services are consumed by actors or other business services and the Business Use-Case diagram provides added richness in describing business capability by illustrating how and when that capability is used.

The purpose of the Business Use-Case diagram is to help to describe and validate the interaction of actors and their roles with processes and functions. As the architecture progresses, the use-case can evolve from the business level to include data, application, and technology details. Architectural business use-cases can also be re-used in systems design work.

An Organization Decomposition diagram describes the links between actors, roles, and locations within an organization tree.

An organization map should provide a chain of command of owners and decision-makers in the organization. Although it is not the intent of the Organization Decomposition diagram to link goal to organization, it should be possible to intuitively link the goals to the stakeholders from the Organization Decomposition diagram.

The purpose of the Process Flow diagram is to depict all models and mappings related to the process metamodel entity.

Process Flow diagrams show sequential flow of control between activities and may utilize swim-lane techniques to represent ownership and realization of process steps. For example, the application that supports a process step may be shown as a swim-lane.

In addition to showing a sequence of activity, process flows can also be used to detail the controls that apply to a process, the events that trigger or result from completion of a process, and also the products that are generated from process execution.

Process Flow diagrams are useful in elaborating the architecture with subject specialists, as they allow the specialist to describe "how the job is done" for a particular function. Through this process, each process step can become a more fine-grained function and can then in turn be elaborated as a process.

The purpose of the Event diagram is to depict the relationship between events and process.

Certain events - such as arrival of certain information (e.g., customer submits sales order) or a certain point in time (e.g., end of fiscal quarter) - cause work and require certain actions to be undertaken within the business. These are often referred to as "business events" or simply "events" and are considered as triggers for a process. It is important to note that the event has to trigger a process and generate a business response or result.

The following describes catalogs, matrices, and diagrams that may be created within Phase C (Data Architecture) as listed in 10.5 Outputs.

The purpose of the Data Entity/Data Component catalog is to identify and maintain a list of all the data used across the enterprise, including data entities and also the data components where data entities are stored. An agreed Data Entity/Data Component catalog supports the definition and application of information management and data governance policies and also encourages effective data sharing and re-use.

The Data Entity/Data Component catalog contains the following metamodel entities:

The purpose of the Data Entity/Business Function matrix is to depict the relationship between data entities and business functions within the enterprise. Business functions are supported by business services with explicitly defined boundaries and will be supported and realized by business processes. The mapping of the Data Entity-Business Function relationship enables the following to take place:

The Data Entity/Business Function matrix shows the following entities and relationships:

The purpose of the Application/Data matrix is to depict the relationship between applications (i.e., application components) and the data entities that are accessed and updated by them.

Applications will create, read, update, and delete specific data entities that are associated with them. For example, a CRM application will create, read, update, and delete customer entity information.

The data entities in a package/packaged services environment can be classified as master data, reference data, transactional data, content data, and historical data. Applications that operate on the data entities include transactional applications, information management applications, and business warehouse applications.

The mapping of the Application Component-Data Entity relationship is an important step as it enables the following to take place:

The Application/Data matrix is a two-dimensional table with Logical Application Component on one axis and Data Entity on the other axis.
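Such a matrix can be sketched as a mapping from (application, data entity) pairs to the operations performed. In the example below, the application and entity names, the use of the letters C, R, U, and D for create, read, update, and delete, and the helper names `render` and `writers` are all illustrative assumptions.

```python
from typing import Dict, List, Tuple

# Hypothetical Application/Data matrix: the operations (Create, Read,
# Update, Delete) that each application performs on each data entity.
matrix: Dict[Tuple[str, str], str] = {
    ("CRM", "Customer"): "CRUD",
    ("CRM", "Order"): "R",
    ("Billing", "Customer"): "R",
    ("Billing", "Invoice"): "CRUD",
}


def render(matrix: Dict[Tuple[str, str], str]) -> str:
    """Render the matrix as a table, applications as rows, entities as columns."""
    apps = sorted({app for app, _ in matrix})
    entities = sorted({entity for _, entity in matrix})
    header = "| Application | " + " | ".join(entities) + " |"
    separator = "|" + "---|" * (len(entities) + 1)
    rows = [
        "| " + app + " | "
        + " | ".join(matrix.get((app, entity), "-") for entity in entities)
        + " |"
        for app in apps
    ]
    return "\n".join([header, separator] + rows)


def writers(matrix: Dict[Tuple[str, str], str], entity: str) -> List[str]:
    """Return the applications that create, update, or delete the entity."""
    return sorted(
        app
        for (app, ent), ops in matrix.items()
        if ent == entity and set(ops) & set("CUD")
    )


print(render(matrix))
print(writers(matrix, "Customer"))
```

A query such as `writers` can help to identify which application holds the master copy of an entity, on the assumption that only the owning application modifies it.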

The key purpose of the Conceptual Data diagram is to depict the relationships between critical data entities within the enterprise. This diagram is developed to address the concerns of business stakeholders.

Techniques used include:

The key purpose of the Logical Data diagram is to show logical views of the relationships between critical data entities within the enterprise. This diagram is developed to address the concerns of:

The purpose of the Data Dissemination diagram is to show the relationship between data entity, business service, and application components. The diagram shows how the logical entities are to be physically realized by application components. This allows effective sizing to be carried out and the IT footprint to be refined. Moreover, by assigning business value to data, an indication of the business criticality of application components can be gained.

Additionally, the diagram may show data replication and application ownership of the master reference for data. In this instance, it can show two copies and the master-copy relationship between them. This diagram can include services; that is, services that encapsulate data and reside in an application, or services that reside on an application and access data encapsulated within the application.

Data is considered an asset to the enterprise, and data security means ensuring that enterprise data is not compromised and that access to it is suitably controlled.

The purpose of the Data Security diagram is to depict which actor (person, organization, or system) can access which enterprise data. This relationship can be shown in a matrix form between two objects or can be shown as a mapping.

The diagram can also be used to demonstrate compliance with data privacy laws and other applicable regulations (HIPAA, SOX, etc.). This diagram should also consider any trust implications where an enterprise's partners or other parties may have access to the company's systems, such as an outsourced situation where information may be managed by other people and may even be hosted in a different country.
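The actor-to-data relationship can be sketched as a simple mapping. The actors, data entities, rights, and the notion of an "external" actor below are all hypothetical, chosen only to illustrate the matrix form and the trust question raised for outsourced parties.

```python
# Hypothetical access matrix: (actor, data entity) -> rights held.
ACCESS = {
    ("Sales Representative", "Customer"): {"read", "update"},
    ("Billing System", "Customer"): {"read"},
    ("Billing System", "Invoice"): {"create", "read"},
    ("Outsourced Help Desk", "Customer"): {"read"},
}

# Hypothetical set of actors outside the enterprise boundary.
EXTERNAL_ACTORS = {"Outsourced Help Desk"}


def can_access(actor, entity, right):
    """Return True if the actor holds the given right on the entity."""
    return right in ACCESS.get((actor, entity), set())


def external_exposure(entity):
    """List the external actors that hold any right on the entity."""
    return sorted(
        actor for (actor, ent), rights in ACCESS.items()
        if ent == entity and rights and actor in EXTERNAL_ACTORS
    )
```

Here `external_exposure("Customer")` lists the outside parties whose access to customer data would need to be reviewed.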

Data migration is critical when implementing a package or packaged service-based solution. This is particularly true when an existing legacy application is replaced with a package or an enterprise is to be migrated to a larger packages/packaged services footprint. Packages tend to have their own data model and during data migration the legacy application data may need to be transformed prior to loading into the package.

Data migration activities will usually involve the following steps:

The purpose of the Data Migration diagram is to show the flow of data from the source to the target applications. The diagram will provide a visual representation of the spread of sources/targets and serve as a tool for data auditing and establishing traceability. This diagram can be elaborated to whatever level of detail is necessary. For example, the diagram can contain just an overall layout of the migration landscape or could go down to the level of individual application metadata elements.
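At the most detailed level, a source-to-target flow can be recorded as a field-level mapping. The sketch below assumes a hypothetical legacy application, hypothetical field names, and simple string transformations; it shows how one mapping can drive both the transformation and the traceability query.

```python
# Hypothetical field-level mapping:
# target field -> (source application, source field, transformation).
MAPPING = {
    "customer_name": ("Legacy CRM", "CUST_NM", str.strip),
    "country_code": ("Legacy CRM", "CNTRY", str.upper),
}


def migrate(record):
    """Transform one legacy record into the target package's data model."""
    return {
        target: transform(record[source_field])
        for target, (_app, source_field, transform) in MAPPING.items()
    }


def lineage(target_field):
    """Trace a target field back to its source application and field."""
    app, source_field, _transform = MAPPING[target_field]
    return f"{app}.{source_field}"
```

For example, `migrate({"CUST_NM": "  Ada ", "CNTRY": "gb"})` yields a record in the target model, while `lineage("country_code")` answers the audit question of where a value came from.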

The Data Lifecycle diagram is an essential part of managing business data throughout its lifecycle from conception until disposal within the constraints of the business process.

The data is considered as an entity in its own right, decoupled from business process and activity. Each change in state is represented on the diagram which may include the event or rules that trigger that change in state.

The separation of data from process allows common data requirements to be identified which enables resource sharing to be achieved more effectively.
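One way to picture the state changes and their triggering events is as a small state-transition table. The states and event names below are hypothetical; a real lifecycle would come from the enterprise's own data management rules.

```python
# Hypothetical lifecycle: (current state, triggering event) -> next state.
TRANSITIONS = {
    ("Conceived", "capture"): "Active",
    ("Active", "retention_period_started"): "Archived",
    ("Archived", "retention_period_expired"): "Disposed",
}


def apply_events(state, events):
    """Walk the lifecycle, rejecting any event not allowed in the current state."""
    for event in events:
        key = (state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} is not allowed in state {state!r}")
        state = TRANSITIONS[key]
    return state
```

Because the table refers only to the data entity's states and events, it stays independent of any particular business process, in line with the separation described above.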

The following section describes catalogs, matrices, and diagrams that may be created within Phase C (Application Architecture) as listed in 11.5 Outputs.

The purpose of this catalog is to identify and maintain a list of all the applications in the enterprise. This list helps to define the horizontal scope of change initiatives that may impact particular kinds of applications. An agreed Application Portfolio allows a standard set of applications to be defined and governed.

The Application Portfolio catalog provides a foundation on which to base the remaining matrices and diagrams. It is typically the start point of the Application Architecture phase.

The Application Portfolio catalog contains the following metamodel entities:

The purpose of the Interface catalog is to scope and document the interfaces between applications to enable the overall dependencies between applications to be scoped as early as possible.

Applications will create, read, update, and delete data within other applications; this will be achieved by some kind of interface, whether via a batch file that is loaded periodically, a direct connection to another application's database, or via some form of API or web service.

The mapping of the Application Component-Application Component entity relationship is an important step as it enables the following to take place:

The Interface catalog contains the following metamodel entities:

The purpose of this matrix is to depict the relationship between applications and organizational units within the enterprise.

Business functions are performed by organizational units. Some of the functions and services performed by those organizational units will be supported by applications. The mapping of the Application Component-Organization Unit relationship is an important step as it enables the following to take place:

The Application/Organization matrix is a two-dimensional table with Logical/Physical Application Component on one axis and Organization Unit on the other axis.

The relationship between these two entities is a composite of a number of metamodel relationships that need validating:

The purpose of the Role/Application matrix is to depict the relationship between applications and the business roles that use them within the enterprise.

People in an organization interact with applications. During this interaction, these people assume a specific role to perform a task; for example, product buyer.

The mapping of the Application Component-Role relationship is an important step as it enables the following to take place:

The Role/Application matrix is a two-dimensional table with Logical Application Component on one axis and Role on the other axis.

The relationship between these two entities is a composite of a number of metamodel relationships that need validating:

The purpose of the Application/Function matrix is to depict the relationship between applications and business functions within the enterprise.

Business functions are performed by organizational units. Some of the business functions and services will be supported by applications. The mapping of the Application Component-Function relationship is an important step as it enables the following to take place:

The Application/Function matrix is a two-dimensional table with Logical Application Component on one axis and Function on the other axis.

The relationship between these two entities is a composite of a number of metamodel relationships that need validating:

The purpose of the Application Interaction matrix is to depict communications relationships between applications.

The mapping of the application interactions shows, in matrix form, the equivalent of the Interface catalog or an Application Communication diagram.

The Application Interaction matrix is a two-dimensional table with Application Service, Logical Application Component, and Physical Application Component on both the rows and the columns of the table.
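Because the matrix is equivalent to the Interface catalog, it can be derived from a list of interfaces. The following is a minimal sketch assuming a hypothetical catalog of (source, target, mechanism) entries with the same components on rows and columns; the application names are illustrative only.

```python
# Hypothetical interface catalog: (source application, target application, mechanism).
INTERFACES = [
    ("CRM", "Billing", "web service"),
    ("Billing", "Data Warehouse", "batch file"),
    ("CRM", "Data Warehouse", "batch file"),
]


def interaction_matrix(interfaces):
    """Return {source: {target: mechanism}} with every application on both axes."""
    apps = sorted({app for src, dst, _ in interfaces for app in (src, dst)})
    # Start from an empty square table, then fill in each known interface.
    matrix = {row: {col: "" for col in apps} for row in apps}
    for src, dst, mechanism in interfaces:
        matrix[src][dst] = mechanism
    return matrix
```

An empty cell indicates that no direct communication between the two components has been recorded.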

The relationships depicted by this matrix include:

The purpose of the Application Communication diagram is to depict all models and mappings related to communication between applications in the metamodel entity.

It shows application components and interfaces between components. Interfaces may be associated with data entities where appropriate. Applications may be associated with business services where appropriate. Communication should be logical and should only show intermediary technology where it is architecturally relevant.

The Application and User Location diagram shows the geographical distribution of applications. It can be used to show where applications are used by the end user; the distribution of where the host application is executed and/or delivered in thin client scenarios; the distribution of where applications are developed, tested, and released; etc.

Analysis can reveal opportunities for rationalization, as well as duplication and/or gaps.

The purpose of this diagram is to clearly depict the business locations from which business users typically interact with the applications, but also the hosting location of the application infrastructure.

The diagram enables:

Users typically interact with applications in a variety of ways; for example:

An Application Use-Case diagram displays the relationships between consumers and providers of application services. Application services are consumed by actors or other application services and the Application Use-Case diagram provides added richness in describing application functionality by illustrating how and when that functionality is used.

The purpose of the Application Use-Case diagram is to help to describe and validate the interaction between actors and their roles with applications. As the architecture progresses, the use-case can evolve from functional information to include technical realization detail.

Application use-cases can also be re-used in more detailed systems design work.

The Enterprise Manageability diagram shows how one or more applications interact with application and technology components that support operational management of a solution.

This diagram is really a filter on the Application Communication diagram, specifically for enterprise management class software.

Analysis can reveal duplication and gaps, and opportunities in the IT service management operation of an organization.

The purpose of the Process/Application Realization diagram is to clearly depict the sequence of events when multiple applications are involved in executing a business process.

It enhances the Application Communication diagram by augmenting it with any sequencing constraints, and hand-off points between batch and real-time processing.

It would identify complex sequences that could be simplified, and identify possible rationalization points in the architecture in order to provide more timely information to business users. It may also identify process efficiency improvements that may reduce interaction traffic between applications.

The Software Engineering diagram breaks applications into packages, modules, services, and operations from a development perspective.

It enables more detailed impact analysis when planning migration stages, and analyzing opportunities and solutions.

It is ideal for application development teams and application management teams when managing complex development environments.

The Application Migration diagram identifies application migration from baseline to target application components. It enables a more accurate estimation of migration costs by showing precisely which applications and interfaces need to be mapped between migration stages.

It identifies temporary applications, staging areas, and the infrastructure required to support migrations (for example, parallel run environments).

The Software Distribution diagram shows how application software is structured and distributed across the estate. It is useful in systems upgrade or application consolidation projects.

This diagram shows how physical applications are distributed across physical technology and the location of that technology.

This enables a clear view of how the software is hosted, but also enables managed operations staff to understand how that application software is maintained once installed.

The following section describes catalogs, matrices, and diagrams that may be created within Phase D (Technology Architecture) as listed in 12.5 Outputs.

The Technology Standards catalog documents the agreed standards for technology across the enterprise, covering technologies and versions, the technology lifecycles, and the refresh cycles for the technology.

Depending upon the organization, this may also include location or business domain-specific standards information.

This catalog provides a snapshot of the enterprise standard technologies that are or can be deployed, and also helps identify the discrepancies across the enterprise.

If technology standards are currently in place, apply these to the Technology Portfolio catalog to gain a baseline view of compliance with technology standards.
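Applying standards to the portfolio amounts to classifying each portfolio entry against the agreed list. The sketch below uses invented product names and versions and a hypothetical `compliance` helper; it is not a TOGAF-defined procedure.

```python
# Hypothetical standards: product -> approved versions.
STANDARDS = {
    "ExampleDB": {"15", "16"},
    "ExampleOS": {"9"},
}

# Hypothetical technology portfolio entries: (product, version).
PORTFOLIO = [
    ("ExampleDB", "16"),
    ("ExampleDB", "11"),
    ("ExampleOS", "9"),
    ("LegacyTool", "3"),
]


def compliance(portfolio, standards):
    """Classify each portfolio entry against the technology standards."""
    report = {"compliant": [], "non_standard_version": [], "no_standard": []}
    for product, version in portfolio:
        if product not in standards:
            report["no_standard"].append((product, version))
        elif version in standards[product]:
            report["compliant"].append((product, version))
        else:
            report["non_standard_version"].append((product, version))
    return report
```

The three buckets give a baseline view: entries that comply, entries on an unapproved version, and products for which no standard exists yet.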

The Technology Standards catalog contains the following metamodel entities:

The purpose of this catalog is to identify and maintain a list of all the technology in use across the enterprise, including hardware, infrastructure software, and application software. An agreed technology portfolio supports lifecycle management of technology products and versions and also forms the basis for definition of technology standards.

The Technology Portfolio catalog provides a foundation on which to base the remaining matrices and diagrams. It is typically the start point of the Technology Architecture phase.

Technology registries and repositories also provide input into this catalog from a baseline and target perspective.

Technologies in the catalog should be classified against the TOGAF Technical Reference Model (TRM) - see Part VI, 43. Foundation Architecture: Technical Reference Model - extending the model as necessary to fit the classification of technology products in use.

The Technology Portfolio catalog contains the following metamodel entities:

The Application/Technology matrix documents the mapping of applications to technology platform.

This matrix should be aligned with and complement one or more platform decomposition diagrams.

The Application/Technology matrix shows:

The Environments and Locations diagram depicts which locations host which applications, identifies what technologies and/or applications are used at which locations, and finally identifies the locations from which business users typically interact with the applications.

This diagram should also show the existence and location of different deployment environments, including non-production environments, such as development and pre-production.

The Platform Decomposition diagram depicts the technology platform that supports the operations of the Information Systems Architecture. The diagram covers all aspects of the infrastructure platform and provides an overview of the enterprise's technology platform. The diagram can be expanded to map the technology platform to appropriate application components within a specific functional or process area. This diagram may show details of specification, such as product versions and number of CPUs, or could simply be an informal "eye-chart" providing an overview of the technical environment.

The diagram should clearly show the enterprise applications and the technology platform for each application area. The technology platform can be further decomposed as follows:

Depending upon the scope of the enterprise architecture work, additional technology cross-platform information (e.g., communications, telco, and video information) may be addressed.

The Processing diagram focuses on deployable units of code/configuration and how these are deployed onto the technology platform. A deployment unit represents grouping of business function, service, or application components. The Processing diagram addresses the following:

The organization and grouping of deployment units depends on separation of concerns between the presentation, business logic, and data store layers, and on the service-level requirements of the components. For example, a presentation layer deployment unit is grouped based on the following:

There are several considerations to determine how application components are grouped together. Each deployment unit is made up of sub-units, such as:

Finally, these deployment units are deployed on either dedicated or shared technology components (workstation, web server, application server, or database server, etc.). It is important to note that technology processing can influence and have implications on the services definition and granularity.

Starting with the transformation to client-server systems from mainframes and later with the advent of e-Business and J2EE, large enterprises moved predominantly into a highly distributed, network-based computing environment with firewalls and demilitarized zones. Currently, most applications have a web front-end and, looking at the deployment architecture of these applications, it is very common to find three distinct layers in the network landscape; namely, a web presentation layer, a business logic or application layer, and a back-end data store layer. It is common practice for applications to be deployed and hosted in a shared and common infrastructure environment.

It is therefore critical to document the mapping between logical applications and the technology components (e.g., server) that support the application in both the development and production environments. The purpose of this diagram is to show the "as deployed" logical view of logical application components in a distributed network computing environment. The diagram is useful for the following reasons:

The scope of the diagram can be appropriately defined to cover a specific application, business function, or the entire enterprise. If the diagram is developed at the enterprise level, the network computing landscape can also be depicted in an application-agnostic way.
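The mapping between logical applications and the technology components that host them can be kept as simple deployment records. The sketch below assumes hypothetical application names, server names, and environments, and shows one query such a mapping supports: finding shared infrastructure.

```python
# Hypothetical deployment records:
# (application component, layer, environment, server).
DEPLOYMENTS = [
    ("Web Shop", "presentation", "production", "web-01"),
    ("Web Shop", "business logic", "production", "app-01"),
    ("Web Shop", "data store", "production", "db-01"),
    ("Order Tracking", "business logic", "production", "app-01"),
    ("Web Shop", "presentation", "development", "dev-01"),
]


def shared_servers(deployments, environment):
    """Return {server: applications} for servers hosting more than one application."""
    hosted = {}
    for app, _layer, env, server in deployments:
        if env == environment:
            hosted.setdefault(server, set()).add(app)
    return {
        server: sorted(apps)
        for server, apps in hosted.items()
        if len(apps) > 1
    }
```

Filtering by environment keeps development and production views separate while using the same underlying records.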

The Communications Engineering diagram describes the means of communication - the method of sending and receiving information - between assets in the Technology Architecture, insofar as the selection of package solutions in the preceding architectures puts specific requirements on the communications between the applications.

The Communications Engineering diagram will take logical connections between client and server components and identify network boundaries and network infrastructure required to physically implement those connections. It does not describe the information format or content, but will address protocol and capacity issues.

The following section describes catalogs, matrices, and diagrams that may be created within Phase E (Opportunities & Solutions) as listed in 13.5 Outputs.

A Project Context diagram shows the scope of a work package to be implemented as a part of a broader transformation roadmap. The Project Context diagram links a work package to the organizations, functions, services, processes, applications, data, and technology that will be added, removed, or impacted by the project.

The Project Context diagram is also a valuable tool for project portfolio management and project mobilization.

The Benefits diagram shows opportunities identified in an architecture definition, classified according to their relative size, benefit, and complexity. This diagram can be used by stakeholders to make selection, prioritization, and sequencing decisions on identified opportunities.

The following section describes catalogs, matrices, and diagrams that may be created within the Requirements Management phase as listed in 17.5 Outputs.

The Requirements catalog captures things that the enterprise needs to do to meet its objectives. Requirements generated from architecture engagements are typically implemented through change initiatives identified and scoped during Phase E (Opportunities & Solutions). Requirements can also be used as a quality assurance tool to ensure that a particular architecture is fit-for-purpose (i.e., can the architecture meet all identified requirements).

The Requirements catalog contains the following metamodel entities:

Part III, 24. Stakeholder Management provides an outline of the major stakeholder groups that are typically encountered when developing enterprise architecture. The likely concerns of each stakeholder group are also identified together with relevant artifacts (catalogs, matrices, and diagrams).

The architecture views, and corresponding viewpoints, that may be created to support each of these stakeholders fall into the following categories:

In the following subsections TOGAF presents some recommended views, some or all of which may be appropriate in a particular architecture development. This is not intended as an exhaustive set of views, but simply as a starting point. Those described may be supplemented by additional views as required. This material should be considered as guides for the development and treatment of a view, not as a full definition of a viewpoint. The artifacts identified in 35.6 Architectural Artifacts by ADM Phase can be used to address specific concerns of the stakeholders, and in some instances the artifacts can be used with the view of the same name; for example, the Software Engineering diagram, Communications Engineering diagram, and Enterprise Manageability diagram.

Each subsection describes the stakeholders related to the view, their concerns, and the entities modeled and the language used to depict the view (the viewpoint). The viewpoint provides architecture concepts from the different perspectives, including components, interfaces, and allocation of services critical to the view. The viewpoint language, analytical methods, and modeling methods associated with views are typically applied with the use of appropriate tools.

The Business Architecture view is concerned with addressing the concerns of users.

This view should be developed for the users. It focuses on the functional aspects of the system from the perspective of the users of the system.

Addressing the concerns of the users includes consideration of the following:

Business scenarios (see Part III, 26. Business Scenarios and Business Goals) are an important technique that may be used prior to, and as a key input to, the development of the Business Architecture view, to help identify and understand business needs, and thereby to derive the business requirements and constraints that the architecture development has to address. Business scenarios are an extremely useful way to depict what should happen when planned and unplanned events occur. It is highly recommended that business scenarios be created for planned change, and for unplanned change.

The following sections describe some of the key issues that the architect might consider when constructing business scenarios.

The Business Architecture view considers the functional aspects of the system; that is, what the new system is intended to do. This can be built up from an analysis of the existing environment and of the requirements and constraints affecting the new system.

The new requirements and constraints will appear from a number of sources, possibly including:

What should emerge from the Business Architecture view is a clear understanding of the functional requirements for the new architecture, with statements like: "Improvements in handling customer enquiries are required through wider use of computer/telephony integration".

The Business Architecture view considers the usability aspects of the system and its environment. It should also consider impacts on the user such as skill levels required, the need for specialized training, and migration from current practice. When considering usability the architect should take into account:

Note that, although security and management are thought about here, it is from a usability and functionality point of view. The technical aspects of security and management are considered in the Enterprise Security view (see 35.7.2 Developing an Enterprise Security View) and the Enterprise Manageability view (see 35.7.7 Developing an Enterprise Manageability View).

The Enterprise Security view is concerned with the security aspects of the system.

This view should be developed for security engineers of the system. It focuses on how the system is implemented from the perspective of security, and how security affects the system properties. It examines the system to establish what information is stored and processed, how valuable it is, what threats exist, and how they can be addressed.

Major concerns for this view are understanding how to ensure that the system is available to only those that have permission, and how to protect the system from unauthorized tampering.

The subjects of the general architecture of a "security system" are components that are secured, or components that provide security services. Additionally, Access Control Lists (ACLs) and security schema definitions are used to model and implement security.

This section presents basic concepts required for an understanding of information system security.

The essence of security is the controlled use of information. The purpose of this section is to provide a brief overview of how security protection is implemented in the components of an information system. Doctrinal or procedural mechanisms, such as physical and personnel security procedures and policy, are not discussed here in any depth.

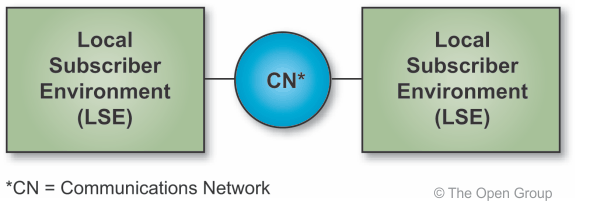

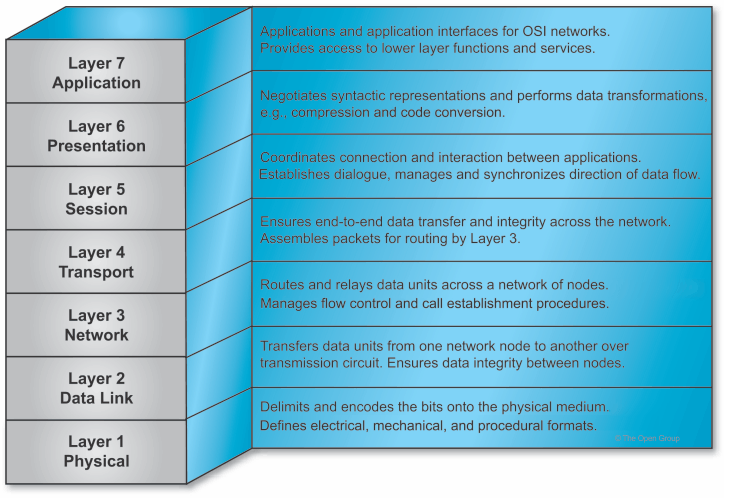

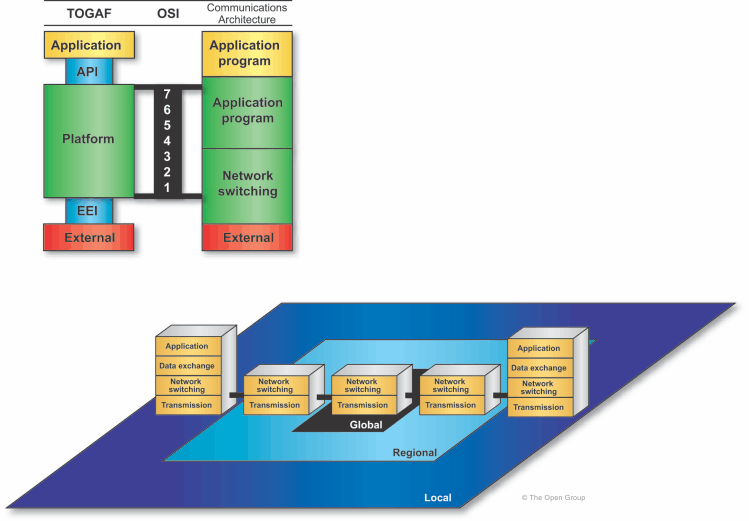

Figure 35-4 depicts an abstract view of an Information Systems Architecture, which emphasizes the fact that an information system from the security perspective is either part of a Local Subscriber Environment (LSE) or a Communications Network (CN). An LSE may be either fixed or mobile. The LSEs by definition are under the control of the using organization. In an open system distributed computing implementation, secure and non-secure LSEs will almost certainly be required to interoperate.

The concept of an information domain provides the basis for discussing security protection requirements. An information domain is defined as a set of users, their information objects, and a security policy. An information domain security policy is the statement of the criteria for membership in the information domain and the required protection of the information objects. Breaking an organization's information down into domains is the first step in reducing the task of security policy development to a manageable size.

The business of most organizations requires that their members operate in more than one information domain. The diversity of business activities and the variation in perception of threats to the security of information will result in the existence of different information domains within one organization security policy. A specific activity may use several information domains, each with its own distinct information domain security policy.

Information domains are not necessarily bounded by information systems or even networks of systems. The security mechanisms implemented in information system components may be evaluated for their ability to meet the information domain security policies.

Information domains can be viewed as being strictly isolated from one another. Information objects should be transferred between two information domains only in accordance with established rules, conditions, and procedures expressed in the security policy of each information domain.
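The definition of an information domain (users, information objects, and a security policy) and the rule that transfers must satisfy the policy of each domain can be sketched as follows. Representing the policy as sets of permitted partner domains is an assumption made purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class InformationDomain:
    """A set of users, their information objects, and a (simplified) policy."""
    name: str
    members: set = field(default_factory=set)
    objects: set = field(default_factory=set)
    # Assumed policy representation: names of domains this domain may
    # send objects to, and names of domains it may receive objects from.
    may_export_to: set = field(default_factory=set)
    may_import_from: set = field(default_factory=set)


def transfer(obj, source, target):
    """Copy an object between domains only if both policies allow it."""
    if obj not in source.objects:
        raise KeyError(f"{obj!r} is not held in domain {source.name!r}")
    allowed_by_source = target.name in source.may_export_to
    allowed_by_target = source.name in target.may_import_from
    if not (allowed_by_source and allowed_by_target):
        raise PermissionError(
            f"transfer from {source.name!r} to {target.name!r} is not permitted"
        )
    target.objects.add(obj)
```

The check is deliberately two-sided: permission in the source domain's policy alone is not enough for the transfer to proceed.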

The concept of "absolute protection" is used to achieve the same level of protection in all information systems supporting a particular information domain. It draws attention to the problems created by interconnecting LSEs that provide different strengths of security protection. This interconnection is likely because open systems may consist of an unknown number of heterogeneous LSEs. Analysis of minimum security requirements will ensure that the concept of absolute protection will be achieved for each information domain across LSEs.

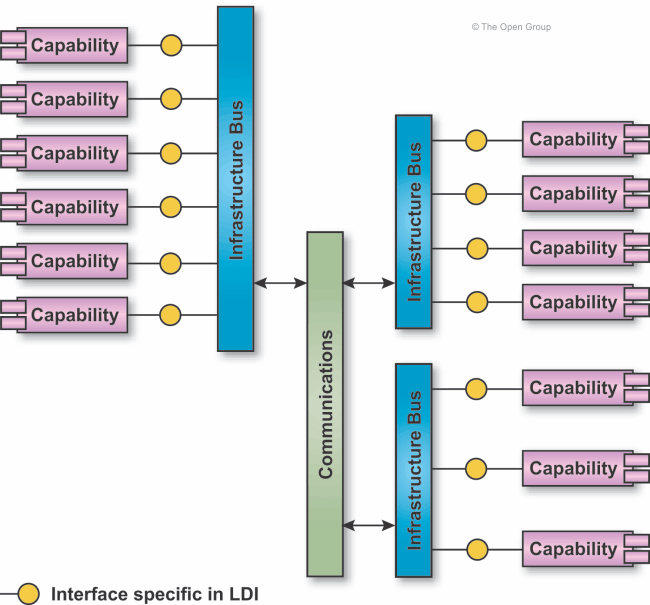

Figure 35-5 shows a generic architecture view which can be used to discuss the allocation of security services and the implementation of security mechanisms. This view identifies the architectural components within an LSE. The LSEs are connected by CNs. The LSEs include end systems, relay systems, and Local Communications Systems (LCSs), described below.

The end system and the relay system are viewed as requiring the same types of security protection. For this reason, a discussion of security protection in an end system generally also applies to a relay system. The security protections in an end system could occur in both the hardware and software.

Security protection of an information system is provided by mechanisms implemented in the hardware and software of the system and by the use of doctrinal mechanisms. The mechanisms implemented in the system hardware and software are concentrated in the end system or relay system. This focus for security protection is based on the open system, distributed computing approach for information systems. This implies use of commercial common carriers and private common-user communications systems as the CN provider between LSEs. Thus, for operation of end systems in a distributed environment, a greater degree of security protection can be ensured from implementation of mechanisms in the end system or relay system.

However, communications networks should satisfy the availability element of security in order to provide appropriate security protection for the information system. This means that CNs must provide an agreed level of responsiveness, continuity of service, and resistance to accidental and intentional threats to the communications service availability.

Implementing the necessary security protection in the end system occurs in three system service areas of TOGAF. They are operating system services, network services, and system management services.

Most of the implementation of security protection is expected to occur in software. The hardware is expected to protect the integrity of the end-system software. Hardware security mechanisms include cryptography and protection against tampering and undesired emanations.

A "security context" is defined as a controlled process space subject to an information domain security policy. The security context is therefore analogous to a common operating system notion of user process space. Isolation of security contexts is required. Security contexts are required for all applications (e.g., end-user and security management applications). The focus is on strict isolation of information domains, management of end-system resources, and controlled sharing and transfer of information among information domains. Where possible, security-critical functions should be isolated into relatively small modules that are related in well-defined ways.

The operating system will isolate multiple security contexts from each other using hardware protection features (e.g., processor state register, memory mapping registers) to create separate address spaces for each of them. Untrusted software will use end-system resources only by invoking security-critical functions through the separation kernel. Most of the security-critical functions are the low-level functions of traditional operating systems.

Two basic classes of communications are envisioned for which distributed security contexts may need to be established. These are interactive and staged (store and forward) communications.

The concept of a "security association" forms an interactive distributed security context. A security association is defined as all the communication and security mechanisms and functions that extend the protections required by an information domain security policy within an end system to information in transfer between multiple end systems. The security association is an extension or expansion of an OSI application layer association. An application layer association is composed of appropriate application layer functions and protocols plus all of the underlying communications functions and protocols at other layers of the OSI model. Multiple security protocols may be included in a single security association to provide for a combination of security services.

For staged delivery communications (e.g., email), use will be made of an encapsulation technique (termed "wrapping process") to convey the necessary security attributes with the data being transferred as part of the network services. The wrapped security attributes are intended to permit the receiving end system to establish the necessary security context for processing the transferred data. If the wrapping process cannot provide all the necessary security protection, interactive security contexts between end systems will have to be used to ensure the secure staged transfer of information.
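The wrapping idea can be sketched as an envelope that carries security attributes alongside the data. The envelope layout, the `information_domain` attribute name, and the use of an HMAC over a shared key are all assumptions made for this illustration; the text above does not prescribe any particular mechanism.

```python
import hashlib
import hmac
import json


def _tag(attributes, payload, key):
    """Compute an integrity tag over the attributes and the payload."""
    header = json.dumps(attributes, sort_keys=True).encode()
    # A NUL byte separates header and payload; JSON output never contains a raw NUL.
    return hmac.new(key, header + b"\x00" + payload, hashlib.sha256).hexdigest()


def wrap(payload, attributes, key):
    """Wrap data with the security attributes needed by the receiver."""
    return {
        "attributes": attributes,
        "payload": payload.hex(),
        "tag": _tag(attributes, payload, key),
    }


def unwrap(envelope, key, known_domains):
    """Verify the envelope and return (information domain, payload)."""
    payload = bytes.fromhex(envelope["payload"])
    expected = _tag(envelope["attributes"], payload, key)
    if not hmac.compare_digest(expected, envelope["tag"]):
        raise ValueError("envelope failed the integrity check")
    domain = envelope["attributes"]["information_domain"]
    if domain not in known_domains:
        raise PermissionError(f"no security context available for {domain!r}")
    return domain, payload
```

On receipt, the returned domain name is what the end system would use to select the security context in which the payload is processed.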

Security management is a particular instance of the general information system management functions discussed in earlier chapters. Information system security management services are concerned with the installation, maintenance, and enforcement of information domain and information system security policy rules in the information system intended to provide these security services. In particular, the security management function controls information needed by operating system services within the end system security architecture. In addition to these core services, security management requires event handling, auditing, and recovery. Standardization of security management functions, data structures, and protocols will enable interoperation of Security Management Application Processes (SMAPs) across many platforms in support of distributed security management.

The Software Engineering view is concerned with the development of new software systems.

Building a software-intensive system is both expensive and time-consuming. Because of this, it is necessary to establish guidelines to help minimize the effort required and the risks involved. This is the purpose of the Software Engineering view, which should be developed for the software engineers who are going to develop the system.

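Major concerns for these stakeholders are the development approach, software modularity and re-use, portability, and migration and interoperability. Each is discussed in turn below.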

There are many lifecycle models defined for software development (waterfall, prototyping, etc.). A consideration for the architect is how best to feed architectural decisions into the lifecycle model that is going to be used for development of the system.

As a piece of software grows in size, so the complexity and inter-dependencies between different parts of the code increase. Reliability will fall dramatically unless this complexity can be brought under control.

Modularity is a concept by which a piece of software is grouped into a number of distinct and logically cohesive sub-units, presenting services to the outside world through a well-defined interface. Generally speaking, the components of a module will share access to common data, and the interface will provide controlled access to this data. Using modularity, it becomes possible to build a software application incrementally on a reliable base of pre-tested code.
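A minimal Python sketch of this idea follows. The `InventoryModule` class and its methods are hypothetical names chosen for illustration: the module's components share one piece of common data (`_stock`), and callers reach that data only through the module's interface.

```python
class InventoryModule:
    """A small module: shared data plus a well-defined interface to it."""

    def __init__(self) -> None:
        # Common data shared by the module's operations; the leading
        # underscore marks it as internal by Python convention.
        self._stock = {}

    def add(self, item: str, quantity: int) -> None:
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        self._stock[item] = self._stock.get(item, 0) + quantity

    def remove(self, item: str, quantity: int) -> None:
        available = self._stock.get(item, 0)
        if quantity <= 0 or quantity > available:
            raise ValueError("invalid quantity")
        self._stock[item] = available - quantity

    def quantity(self, item: str) -> int:
        return self._stock.get(item, 0)


inventory = InventoryModule()
inventory.add("widget", 5)
inventory.remove("widget", 2)
```

Because every change passes through `add` and `remove`, the validation rules can be tested once and then relied on by any application built on top of the module.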

A further benefit of a well-defined modular system is that the modules defined within it may be re-used in the same or on other projects, cutting development time dramatically by reducing both development and testing effort.

In recent years, the development of object-oriented programming languages has greatly increased programming language support for module development and code re-use. Such languages allow the developer to define "classes" (a unit of modularity) of objects that behave in a controlled and well-defined manner. Techniques such as inheritance - which enables parts of an existing interface to an object to be changed - enhance the potential for re-usability by allowing predefined classes to be tailored or extended when the services they offer do not quite meet the requirement of the developer.
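The tailoring described here can be sketched in a few lines of Python. The class names and the discount rule are hypothetical. The point is only that the subclass changes one part of the predefined class and re-uses the rest unchanged.

```python
class PriceCalculator:
    """Predefined class offering a pricing service."""

    def discount_rate(self) -> float:
        return 0.0

    def price(self, list_price_cents: int) -> int:
        # Re-used as-is by every subclass.
        return round(list_price_cents * (1 - self.discount_rate()))


class MemberPriceCalculator(PriceCalculator):
    """Tailored class: overrides only the part that did not fit."""

    def discount_rate(self) -> float:
        return 0.25


standard_price = PriceCalculator().price(1000)
member_price = MemberPriceCalculator().price(1000)
```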

If modularity and software re-use are likely to be key objectives of new software developments, consideration must be given to whether the component parts of any proposed architecture may facilitate or prohibit the desired level of modularity in the appropriate areas.

Software portability - the ability to take a piece of software written in one environment and make it run in another - is important in many projects, especially product developments. It requires that all software and hardware aspects of a chosen Technology Architecture (not just the newly developed application) be available on the new platform. It will, therefore, be necessary to ensure that the component parts of any chosen architecture are available across all the appropriate target platforms.

Interoperability is always required between the component parts of a new architecture. It may also, however, be required between a new architecture and parts of an existing legacy system; for example, during the staggered replacement of an old system. Interoperability between the new and old architectures may, therefore, be a factor in architectural choice.

This view considers two general categories of software systems. First, there are those systems that require only a user interface to a database, with little or no business logic built into the software. These systems can be called "data-intensive". Second, there are those systems that require users to manipulate information that might be distributed across multiple databases, and to do this manipulation according to a predefined business logic. These systems can be called "information-intensive".

Data-intensive systems can be built with reasonable ease through the use of 4GL tools. In these systems, the business logic is in the mind of the user; i.e., the user understands the rules for manipulating the data and applies those rules while working.

Information-intensive systems are different. Information is defined as "meaningful data"; i.e., data in a context that includes business logic. Information is different from data. Data is the tokens that are stored in databases or other data stores. Information is multiple tokens of data combined to convey a message. For example, "3" is data, but "3 widgets" is information. Typically, information reflects a model. Information-intensive systems also tend to require information from other systems and, if this path of information passing is automated, usually some mediation is required to convert the format of incoming information into a format that can be locally used. Because of this, information-intensive systems tend to be more complex than others, and require the most effort to build, integrate, and maintain.

This view is concerned primarily with information-intensive systems. In addition to managing information, though, systems should also be as flexible as possible. This has a number of benefits. It allows the system to be used in different environments; for example, the same system should be usable with different sources of data, even if the new data store has a different configuration. Similarly, it might make sense to use the same functionality but with users who need a different user interface. So information systems should be built so that they can be reconfigured with different data stores or different user interfaces. If a system is built to allow this, it enables the enterprise to re-use parts (or components) of one system in another.

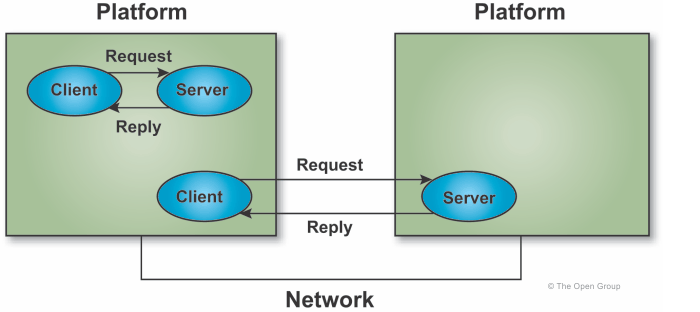

The word "interoperate" implies that one processing system performs an operation on behalf of or at the behest of another processing system. In practice, the request is a complete sentence containing a verb (operation) and one or more nouns (identities of resources, where the resources can be information, data, physical devices, etc.). Interoperability comes from shared functionality.

Interoperability can only be achieved when information is passed, not when data is passed. Most information systems today get information both from their own data stores and other information systems. In some cases the web of connectivity between information systems is quite extensive. The US Air Force, for example, has a concept known as "A5 Interoperability". This means that the required data is available Anytime, Anywhere, by Anyone, who is Authorized, in Any way. This requires that many information systems are architecturally linked and provide information to each other.

There must be some kind of physical connectivity between the systems. This might be a Local Area Network (LAN), a Wide Area Network (WAN), or, in some cases, it might simply be the passing of data storage media between systems. Assuming a network connects the systems, there must be agreement on the protocols used. This enables the transfer of bits.

When the bits are assembled at the receiving system, they must be placed in the context that the receiving system needs. In other words, both the source and destination systems must agree on an information model. The source system uses this model to convert its information into data to be passed, and the destination system uses this same model to convert the received data into information it can use.
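To make the role of the shared information model concrete, here is a minimal Python sketch. The model itself (three named, typed fields) and the pipe-delimited wire format are assumptions made for illustration. Both systems import the same model, the source uses it to flatten information into data, and the destination uses it to rebuild the information.

```python
# Shared information model agreed by both systems (hypothetical fields).
INFORMATION_MODEL = (("part_number", str), ("quantity", int), ("unit", str))


def to_data(information: dict) -> str:
    """Source side: convert information into data tokens for transfer."""
    return "|".join(str(information[name]) for name, _ in INFORMATION_MODEL)


def to_information(data: str) -> dict:
    """Destination side: place the received tokens back into context."""
    tokens = data.split("|")
    if len(tokens) != len(INFORMATION_MODEL):
        raise ValueError("data does not match the agreed information model")
    return {
        name: convert(token)
        for (name, convert), token in zip(INFORMATION_MODEL, tokens)
    }


wire = to_data({"part_number": "W-17", "quantity": 3, "unit": "widgets"})
restored = to_information(wire)
```

On the wire, `"W-17|3|widgets"` is only data. It becomes information again when the destination applies the agreed model and learns that `3` is a quantity measured in widgets.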

This usually requires an agreement between the architects and designers of the two systems. In the past, this agreement was often documented in the form of an Interface Control Document (ICD). The ICD defines the exact syntax and semantics that the sending system will use so that the receiving system will know what to do when the data arrives. The biggest problem with ICDs is that they tend to be unique solutions between two systems. If a given system must share information with n other systems, there is the potential need for n² ICDs. This extremely tight integration prohibits flexibility and the ability of a system to adapt to a changing environment. Maintaining all these ICDs is also a challenge.
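The growth in the number of ICDs can be shown with a short calculation. The Python sketch below rests on two assumptions that are not stated in this chapter: that every system exchanges information with every other system and one ICD is written per sender and receiver pair, and, for comparison, that a common information model would need one outbound and one inbound mapping per system.

```python
def point_to_point_icds(system_count: int) -> int:
    # Assumption: one ICD per ordered (sender, receiver) pair,
    # which grows on the order of the square of the system count.
    return system_count * (system_count - 1)


def shared_model_mappings(system_count: int) -> int:
    # Assumption: each system maps to and from one common model.
    return 2 * system_count


for count in (3, 10, 50):
    print(count, point_to_point_icds(count), shared_model_mappings(count))
```

Under these assumptions, ten systems need 90 pairwise ICDs but only 20 mappings to a common model, which is one way to see why unique pairwise agreements become hard to maintain.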

New technology, such as eXtensible Markup Language (XML), has the promise of making data "self describing". Use of new technologies such as XML, once they become reliable and well documented, might eliminate the need for an ICD. Further, there would be Commercial Off-The-Shelf (COTS) products available to parse and manipulate the XML data, eliminating the need to develop these products in-house. It should also ease the pain of maintaining all the interfaces.

Another approach is to build "mediators" between the systems. Mediators would use metadata that is sent with the data to understand the syntax and semantics of the data and convert it into a format usable by the receiving system. However, mediators do require that well-formed metadata be sent, adding to the complexity of the interface.
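A mediator of this kind might look like the following Python sketch. The message layout (`metadata` with `fields` and `units`, plus `records`), the unit conversion table, and the `mediate` function are all hypothetical and serve only to show metadata driving the conversion.

```python
from typing import Any, Dict, List

# Hypothetical conversion factors known to the mediator.
UNIT_FACTORS = {("km", "m"): 1000.0, ("m", "m"): 1.0}


def mediate(message: Dict[str, Any], target_unit: str = "m") -> List[Dict[str, Any]]:
    """Convert incoming records into the receiver's format using metadata."""
    metadata = message.get("metadata")
    if not metadata or "fields" not in metadata or "units" not in metadata:
        # Without well-formed metadata the mediator cannot interpret the data.
        raise ValueError("well-formed metadata is required")
    converted = []
    for record in message["records"]:
        row = dict(zip(metadata["fields"], record))    # syntax: name the tokens
        for field, unit in metadata["units"].items():  # semantics: fix the units
            row[field] = row[field] * UNIT_FACTORS[(unit, target_unit)]
        converted.append(row)
    return converted


incoming = {
    "metadata": {"fields": ["route", "distance"], "units": {"distance": "km"}},
    "records": [["R1", 2.5]],
}
usable = mediate(incoming)
```

The sketch also shows the cost noted above: a message that arrives without its metadata is rejected, so the sender must produce that metadata reliably.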

Typically, software architectures are either two-tier or three-tier.2

Each tier typically presents at least one capability.

In a two-tier architecture, the user interface and business logic are tightly coupled while the data is kept independent. This gives the advantage of allowing the data to reside on a dedicated data server. It also allows the data to be independently maintained. The tight coupling of the user interface and business logic ensures that they will work well together, for this problem in this domain. However, the same tight coupling dramatically increases maintainability risks while reducing flexibility and opportunities for re-use.

A three-tier approach adds a tier that separates the business logic from the user interface. This in principle allows the business logic to be used with different user interfaces as well as with different data stores. With respect to the use of different user interfaces, users might want the same user interface but using different COTS presentation servers; for example, Java Virtual Machine (JVM). Similarly, if the business logic is to be used with different data stores, then each data store must use the same data model3 (data standardization), or a mediation tier must be added above the data store (data encapsulation).
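The separation can be sketched in Python as follows. All names here (`OrderLogic`, `in_memory_store`, `text_ui`, `json_ui`) are hypothetical. The business logic tier receives its data source as a parameter and returns plain values, so it can be paired with either user interface without change.

```python
import json
from typing import Callable, Dict


class OrderLogic:
    """Business logic tier: unaware of storage and of presentation."""

    def __init__(self, load_prices: Callable[[], Dict[str, int]]) -> None:
        self._load_prices = load_prices

    def order_total(self, items: Dict[str, int]) -> int:
        prices = self._load_prices()
        return sum(prices[name] * quantity for name, quantity in items.items())


def in_memory_store() -> Dict[str, int]:
    """Data tier stand-in: prices in cents."""
    return {"widget": 250, "gadget": 1000}


def text_ui(total_cents: int) -> str:
    """One user interface tier."""
    return f"Total: {total_cents / 100:.2f}"


def json_ui(total_cents: int) -> str:
    """A different user interface over the same business logic."""
    return json.dumps({"total_cents": total_cents})


logic = OrderLogic(in_memory_store)
total = logic.order_total({"widget": 3, "gadget": 1})
```

Replacing `in_memory_store` with another loader that returns the same shape of data corresponds to the data standardization option; a loader that first converts a different schema corresponds to the mediation tier.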

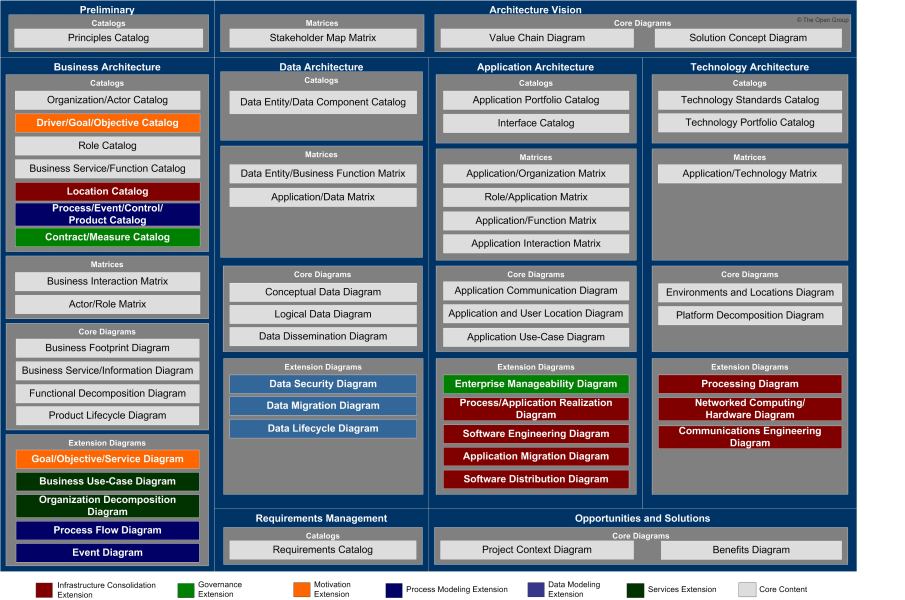

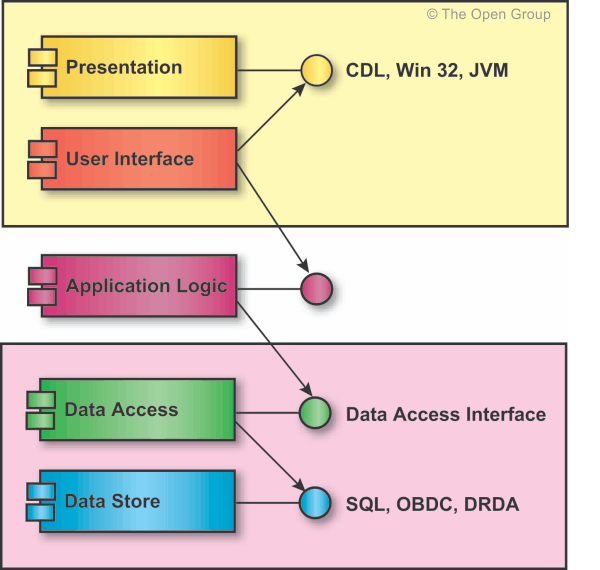

To achieve maximum flexibility, software should use a five-tier scheme that extends the three-tier paradigm (see Figure 35-6). The scheme is intended to provide strong separation of the three major functional areas of the architecture. Since there are client and server aspects of both the user interface and the data store, the scheme then has five tiers.4

The presentation tier is typically COTS-based. The presentation interface might be an X Server, Win32, etc. There should be a separate tier for the user interface client. This client establishes the look-and-feel of the interface; the server (presentation tier) actually performs the tasks by manipulating the display. The user interface client hides the presentation server from the application business logic.

The application business logic (e.g., a scheduling engine) should be a separate tier. This tier is called the "application logic" and functions as a server for the user interface client. It interfaces to the user interface typically through callbacks. The application logic tier also functions as a client to the data access tier.

If there is a user need to use an application with multiple databases with different schema, then a separate tier is needed for data access. This client would access the data stores using the appropriate COTS interface5 and then convert the raw data into an abstract data type representing parts of the information model. The interface into this object network would then provide a generalized Data Access Interface (DAI) which would hide the storage details of the data from any application that uses that data.

Each tier in this scheme can have zero or more components. The organization of the components within a tier is flexible and can reflect a number of different architectures based on need. For example, there might be many different components in the application logic tier (scheduling, accounting, inventory control, etc.) and the relationship between them can reflect whatever architecture makes sense, but none of them should be a client to the presentation server.

This clean separation of user interface, business logic, and information will result in maximum flexibility and componentized software that lends itself to product line development practices. For example, it is conceivable that the same functionality should be built once and yet be usable by different presentation servers (e.g., on PCs or UNIX system boxes), displayed with different looks and feels depending on user needs, and usable with multiple legacy databases. Moreover, this flexibility should not require massive rewrites to the software whenever a change is needed.

The data access tier provides a standardized view of certain classes of data, and as such functions as a server to one or more application logic tiers. If implemented correctly, there would be no need for application code to "know" about the implementation details of the data. The application code would only have to know about an interface that presents a level of abstraction higher than the data. This interface is called the Data Access Interface (DAI).
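A minimal Python sketch of a DAI is given below. The `Employee` type, the `EmployeeDAI` interface, and the two store classes with their schemas are hypothetical. The sketch shows application code working against the abstract interface while each data access component hides how its own store lays out the data.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Employee:
    """Abstract data type representing part of the information model."""
    employee_id: str
    full_name: str


class EmployeeDAI(ABC):
    """Data Access Interface seen by the application logic tier."""

    @abstractmethod
    def find(self, employee_id: str) -> Employee: ...


class LegacyHRStore(EmployeeDAI):
    """Hypothetical schema: id mapped to a 'LAST, FIRST' string."""

    def __init__(self, rows: Dict[str, str]) -> None:
        self._rows = rows

    def find(self, employee_id: str) -> Employee:
        last, first = [part.strip() for part in self._rows[employee_id].split(",")]
        return Employee(employee_id, f"{first} {last}")


class PayrollStore(EmployeeDAI):
    """Hypothetical schema: records with separate name columns."""

    def __init__(self, records: List[Dict[str, str]]) -> None:
        self._records = records

    def find(self, employee_id: str) -> Employee:
        for record in self._records:
            if record["emp_no"] == employee_id:
                return Employee(employee_id, f"{record['first']} {record['last']}")
        raise KeyError(employee_id)


def badge_label(dai: EmployeeDAI, employee_id: str) -> str:
    """Application code: depends only on the DAI, not on any schema."""
    employee = dai.find(employee_id)
    return f"{employee.full_name} ({employee.employee_id})"


legacy = LegacyHRStore({"E1": "Lovelace, Ada"})
payroll = PayrollStore([{"emp_no": "E2", "first": "Alan", "last": "Turing"}])
```

Here `badge_label` produces the same kind of result from either store, so adding a third store with yet another schema would require a new data access component but no change to the application code.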