Digital Infrastructure

Area Description

A Digital Practitioner cannot start developing a product until deciding what it will be built with. They also need to understand something of how computers are operated, enough to make decisions on how the system will run. Most startups choose to run IT services on infrastructure owned by a cloud computing provider, but there are other options. As the product scales up, the practitioner will need to be more and more sophisticated in their understanding of its underlying IT services. Finally, developing deep skills in configuring the base platform is one of the most important capabilities for the practitioner.

Computing and Information Principles

Description

“Information Technology” (IT) is ultimately based on the work of Claude Shannon, Alan Turing, Alonzo Church, John von Neumann, and the other pioneers who defined the central problems of information theory, digital logic, computability, and computer architecture.

Pragmatically, there are three major physical aspects to “IT infrastructure” relevant to the practitioner:

-

Computing cycles (sometimes called just “compute”)

-

Memory and storage (or “storage”)

-

Networking and communications (or “network”)

Compute

Compute is the resource that performs the rapid, clock-driven digital logic that transforms data inputs to outputs.

Software is the thing that structures the logic and reasoning of the “compute” and allows for the dynamic use of inputs to vary the output following the logic and reasoning laid down by the software developer. While the computers process instructions at the level of “true” and “false”, represented as binary “1s” and “0s”, because humans cannot easily understand binary data and processing, higher-level abstractions of machine code and programming languages are used.

It is critical to understand that computers, traditionally understood, can only operate in precise, "either-or" ways. Computers are often used to automate business processes, but in order to do so, the process needs to be carefully defined, with no ambiguity. Complications and nuances, intuitive understandings, judgment calls — in general, computers can’t do any of this, unless and until you program them to — at which point the logic is no longer intuitive or a judgment call.

Creating programs for a specific functionality is challenging in two different ways:

-

Understanding the desired functionality, logic, and reasoning of the intended program takes skill as does the implementation of that reasoning into software and requires much testing and validation

-

The software programming languages, designs, and methods used can be flawed and unable to withstand the intended volume of data, user interactions, malicious inputs, or careless inputs, and testing for these must also be done, known as abuse "case testing"

Computer processing is not free. Moving data from one point to another — the fundamental transmission of information — requires matter and energy, and is bound up in physical reality and the laws of thermodynamics. The same applies for changing the state of data, which usually involves moving it somewhere, operating on it, and returning it to its original location. In the real world, even running the simplest calculation has physical and therefore economic cost, and so we must pay for computing.

Storage

Storage is the act of computation that is bound up with the concept of state, but they are also distinct. Computation is a process; state is a condition. Many technologies have been used for digital storage [71]. Increasingly, the IT professional need not be concerned with the physical infrastructure used for storing data. Storage increasingly is experienced as a virtual resource, accessed through executing programmed logic on cloud platforms. “Underneath the covers” the cloud provider might be using various forms of storage, from Random Access Memory (RAM) to solid state drives to tapes, but the end user is, ideally, shielded from the implementation details (part of the definition of a service).

In general, storage follows a hierarchy. Just as we might “store” a document by holding it in our hands, setting it on a desktop, filing it in a cabinet, or archiving it in a banker’s box in an offsite warehouse, so computer storage also has different levels of speed and accessibility:

-

On-chip registers and cache

-

Random Access Memory (RAM), aka “main memory”

-

Online mass storage, often “disk”

-

Offline mass storage; e.g., “tape”

Networking

With a computing process, one can change the state of some data, store it, or move it. The last is the basic concern of networking, to transmit data (or information) from one location to another. We see evidence of networking every day; coaxial cables for cable TV, or telephone lines strung from pole to pole in many areas. However, like storage, there is also a hierarchy of networking:

-

Intra-chip pathways

-

Motherboard and backplane circuits

Like storage and compute, networking as a service increasingly is independent of implementation. The developer uses programmatic tools to define expected information transmission, and (ideally) need not be concerned with the specific networking technologies or architectures serving their needs.

Evidence of Notability

-

Body of computer science and information theory (Church/Turing/Shannon et al.)

-

Basic IT curricula guidance and textbooks

Limitations

-

Quantum computing

-

Computing where mechanisms become opaque (e.g., neural nets) and therefore appear to be non-deterministic

Related Topics

Virtualization

Virtualization Basics

Description

Assume a simple, physical computer such as a laptop. When the laptop is first turned on, the OS loads; the OS is itself software, but is able to directly control the computer’s physical resources: its Central Processing Unit (CPU), memory, screen, and any interfaces such as WiFi, USB, and Bluetooth. The OS (in a traditional approach) then is used to run “applications” such as web browsers, media players, word processors, spreadsheets, and the like. Many such programs can also be run as applications within the browser, but the browser still needs to be run as an application.

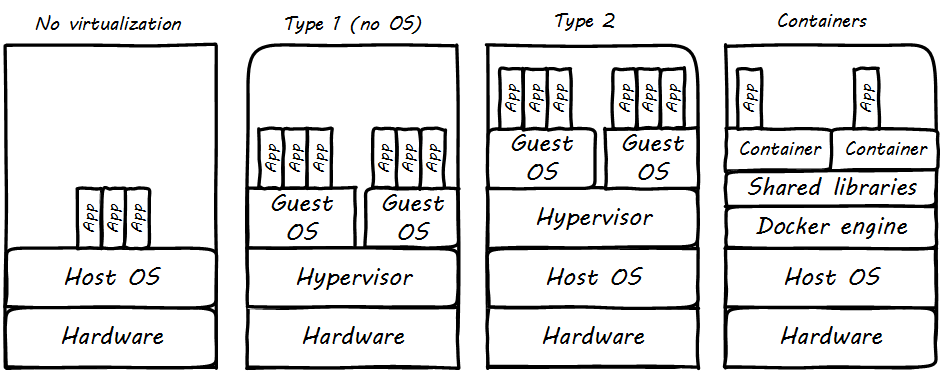

In the simplest form of virtualization, a specialized application known as a hypervisor is loaded like any other application. The purpose of this hypervisor is to emulate the hardware computer in software. Once the hypervisor is running, it can emulate any number of “virtual” computers, each of which can have its own OS (see Virtualization is Computers within a Computer). The hypervisor mediates the "virtual machine" access to the actual, physical hardware of the laptop; the virtual machine can take input from the USB port, and output to the Bluetooth interface, just like the master OS that launched when the laptop was turned on.

There are two different kinds of hypervisors. The example we just discussed was an example of a Type 2 hypervisor, which runs on top of a host OS. In a Type 1 hypervisor, a master host OS is not used; the hypervisor runs on the “bare metal” of the computer and in turn “hosts” multiple virtual machines.

Paravirtualization, e.g., containers, is another form of virtualization found in the marketplace. In a paravirtualized environment, a core OS is able to abstract hardware resources for multiple virtual guest environments without having to virtualize hardware for each guest. The benefit of this type of virtualization is increased Input/Output (I/O) efficiency and performance for each of the guest environments.

However, while hypervisors can support a diverse array of virtual machines with different OSs on a single computing node, guest environments in a paravirtualized system generally share a single OS. See Virtualization Types for an overview of all the types.

Virtualization and Efficiency

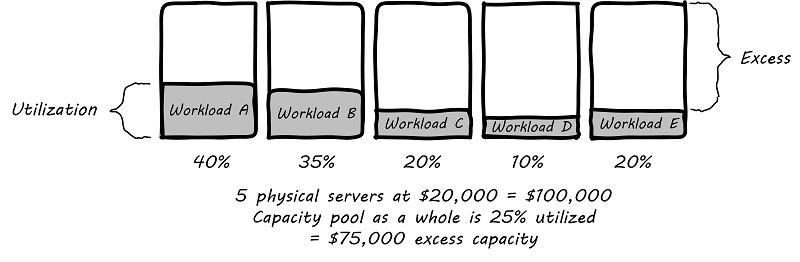

Virtualization attracted business attention as a means to consolidate computing workloads. For years, companies would purchase servers to run applications of various sizes, and in many cases the computers were badly underutilized. Because of configuration issues and (arguably) an overabundance of caution, average utilization in a pre-virtualization data center might average 10-20%. That’s up to 90% of the computer’s capacity being wasted (see Inefficient Utilization).

The above figure is a simplification. Computing and storage infrastructure supporting each application stack in the business were sized to support each workload. For example, a payroll server might run on a different infrastructure configuration than a Data Warehouse (DW) server. Large enterprises needed to support hundreds of different infrastructure configurations, increasing maintenance and support costs.

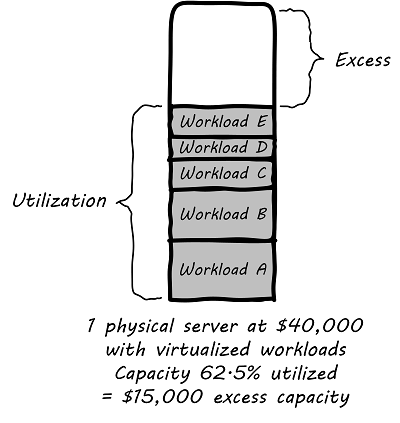

The adoption of virtualization allowed businesses to compress multiple application workloads onto a smaller number of physical servers (see Efficiency through Virtualization).

| For illustration only. A utilization of 62.5% might actually be a bit too high for comfort, depending on the variability and criticality of the workloads. |

In most virtualized architectures, the physical servers supporting workloads share a consistent configuration, which makes it easy to add and remove resources from the environment. The virtual machines may still vary greatly in configuration, but the fact of virtualization makes managing that easier — the virtual machines can be easily copied and moved, and increasingly can be defined as a form of code.

Virtualization thus introduced a new design pattern into the enterprise where computing and storage infrastructure became commoditized building blocks supporting an ever-increasing array of services. But what about where the application is large and virtualization is mostly overhead? Virtualization still may make sense in terms of management consistency and ease of system recovery.

Container Management and Kubernetes

Containers (paravirtualization) have emerged as a powerful and convenient technology for managing various workloads. Architectures based on containers running in Cloud platforms, with strong API provisioning and integrated support for load balancing and autoscaling, are called "cloud-native". The perceived need for a standardized control plane for containers resulted in various initiatives in the 2010s: Docker Swarm, Apache Mesos®, and (emerging as the de facto standard), the Cloud Native Computing Foundation’s Kubernetes.

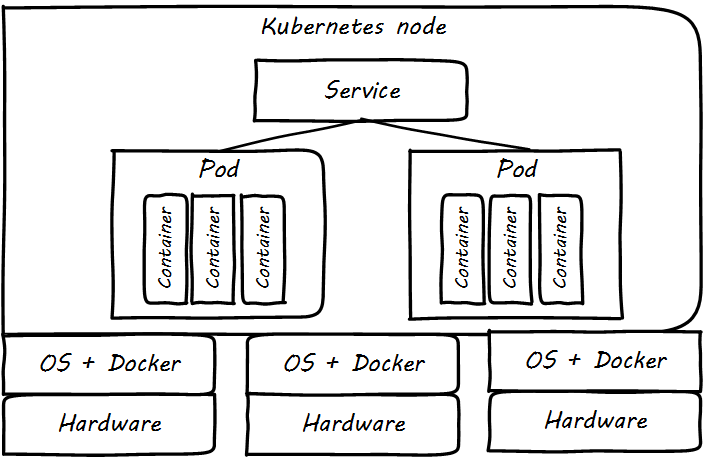

Kubernetes is an open source orchestration platform based on the following primitives (see Kubernetes Infrastructure, Pods, and Services):

-

Pods: group containers

-

Services: a set of pods supporting a common set of functionality

-

Volumes: define persistent storage coupled to the lifetime of pods (therefore lasting across container lifetimes, which can be quite brief)

-

Namespaces: in Kubernetes (as in computing generally) provide mutually-exclusive labeling to partition resources

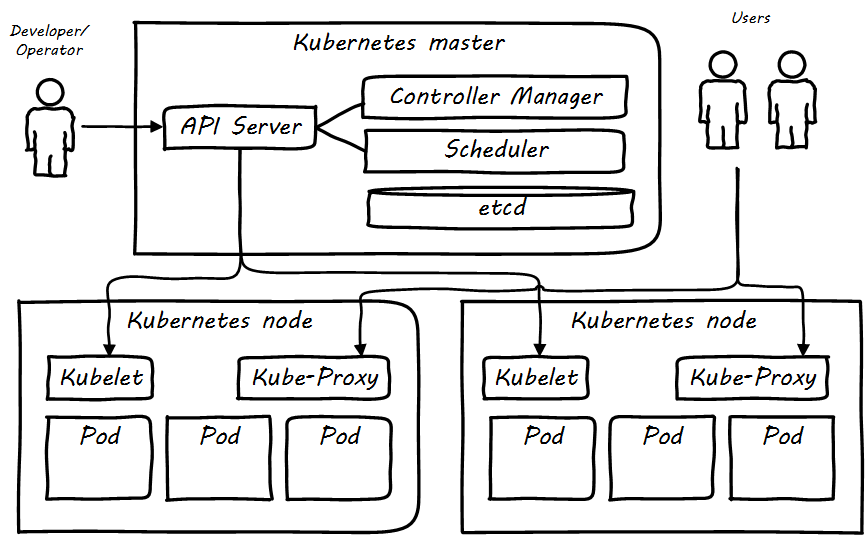

Kubernetes management (see Kubernetes Cluster Architecture) is performed via a Master controller which supervisees the nodes. This consists of:

-

API server: the primary communication point for provisioning and control requests

-

Controller manager: implements declarative functionality, in which the state of the cluster is managed against policies; the controller seeks to continually converge the actual state of the cluster with the intended (policy-specified) state (see Imperative and Declarative)

-

Scheduler: manages the supply of computing resources to the stated (policy-drive) demand

The nodes run:

-

Kubelet for managing nodes and containers

-

Kube-proxy for services and traffic management

Much other additional functionality is available and under development; the Kubernetes ecosystem as of 2019 is growing rapidly.

Graphics similar to those presented in [305+]+.

Competency Category "Virtualization" Example Competencies

-

Install and configure a virtual machine

-

Configure several virtual machines to communicate with each other

Evidence of Notability

Virtualization was predicted in the earliest theories that led to the development of computers. Turing and Church realized that any general-purpose computer could emulate any other. Virtual systems have existed in some form since at latest 1967 — only 20 years after the first fully functional computers.

Virtualization is discussed extensively in core computer science and engineering texts and is an essential foundation of cloud computing.

The Cloud-Native community is at this writing (2019) one of the most active communities in computing.

Limitations

Virtualization is mainly relevant to production computing. It is less relevant to edge devices.

Related Topics

Cloud Services

Description

Companies have always sought alternatives to owning their own computers. There is a long tradition of managed services, where applications are built out by a customer and then their management is outsourced to a third party. Using fractions of mainframe “time-sharing” systems is a practice that dates back decades. However, such relationships took effort to set up and manage, and might even require bringing physical tapes to the third party (sometimes called a “service bureau”). Fixed-price commitments were usually high (the customer had to guarantee to spend X dollars). Such relationships left much to be desired in terms of responsiveness to change.

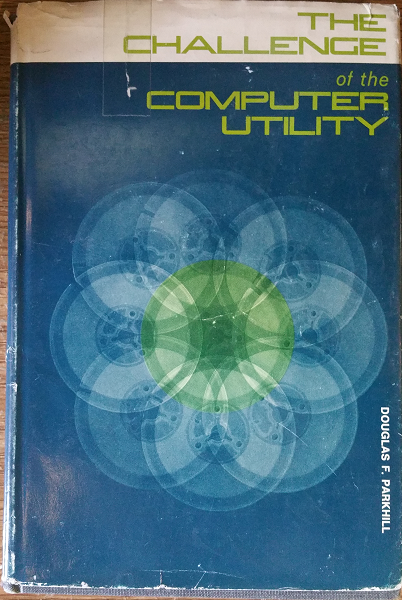

As computers became cheaper, companies increasingly acquired their own data centers, investing large amounts of capital in high-technology spaces with extensive power and cooling infrastructure. This was the trend through the late 1980s to about 2010, when cloud computing started to provide a realistic alternative with true “pay as you go” pricing, analogous to electric metering.

The idea of running IT completely as a utility service goes back at least to 1965 and the publication of The Challenge of the Computer Utility, by Douglas Parkhill (see Initial Statement of Cloud Computing). While the conceptual idea of cloud and utility computing was foreseeable 50 years ago, it took many years of hard-won IT evolution to support the vision. Reliable hardware of exponentially increasing performance, robust open-source software, Internet backbones of massive speed and capacity, and many other factors converged towards this end.

However, people store data — often private — on computers. In order to deliver compute as a utility, it is essential to segregate each customer’s workload from all others. This is called multi-tenancy. In multi-tenancy, multiple customers share physical resources that provide the illusion of being dedicated.

| The phone system has been multi-tenant ever since they got rid of party lines. A party line was a shared line where anyone on it could hear every other person. |

In order to run compute as a utility, multi-tenancy was essential. This is different from electricity (but similar to the phone system). As noted elsewhere, one watt of electric power is like any other and there is less concern for information leakage or unexpected interactions. People’s bank balances are not encoded somehow into the power generation and distribution infrastructure.

Virtualization is necessary, but not sufficient for cloud. True cloud services are highly automated, and most cloud analysts will insist that if virtual machines cannot be created and configured in a completely automated fashion, the service is not true cloud. This is currently where many in-house “private” cloud efforts struggle; they may have virtualization, but struggle to make it fully self-service.

Cloud services have refined into at least three major models:

-

Software as a Service (SaaS)

-

Platform as a Service (PaaS)

-

Infrastructure as a Service (IaaS)

There are cloud services beyond those listed above (e.g., Storage as a Service). Various platform services have become extensive on providers such as Amazon™, which offers load balancing, development pipelines, various kinds of storage, and much more.

Evidence of Notability

Cloud computing is one of the most economically active sectors in IT. Cloud computing has attracted attention from the US National Institute for Standards and Technology (NIST) [209]. Cloud law is becoming more well defined [196].

Limitations

The future of cloud computing appears assured, but computing and digital competencies also extend to edge devices and in-house computing. The extent to which organizations will retain in-house computing is a topic of industry debate.

Related Topics

Configuration Management and Infrastructure as Code

Description

This section covers:

-

Version control

-

Source control

-

Package management

-

Deployment management

-

Configuration management

Managing Infrastructure

Two computers may both run the same version of an OS, and yet exhibit vastly different behaviors. This is due to how they are configured. One may have web serving software installed; the other may run a database. One may be accessible to the public via the Internet; access to the other may be tightly restricted to an internal network. The parameters and options for configuring general-purpose computers are effectively infinite. Mis-configurations are a common cause of outages and other issues.

In years past, infrastructure administrators relied on the ad hoc issuance of commands either at an operations console or via a GUI-based application. Such commands could also be listed in text files; i.e., "batch files" or "shell scripts" to be used for various repetitive processes, but systems administrators by tradition and culture were empowered to issue arbitrary commands to alter the state of the running system directly.

However, it is becoming more and more rare for a systems administrator to actually “log in” to a server and execute configuration-changing commands in an ad hoc manner. Increasingly, all actual server configuration is based on pre-developed specification.

Because virtualization is becoming so powerful, servers increasingly are destroyed and rebuilt at the first sign of any trouble. In this way, it is certain that the server’s configuration is as intended. This again is a relatively new practice.

Previously, because of the expense and complexity of bare-metal servers, and the cost of having them offline, great pains were taken to fix troubled servers. Systems administrators would spend hours or days troubleshooting obscure configuration problems, such as residual settings left by removed software. Certain servers might start to develop “personalities”. Industry practice has changed dramatically here since around 2010.

As cloud infrastructures have scaled, there has been an increasing need to configure many servers identically. Auto-scaling (adding more servers in response to increasing load) has become a widely used strategy as well. Both call for increased automation in the provisioning of IT infrastructure. It is simply not possible for a human being to be hands on at all times in configuring and enabling such infrastructures, so automation is called for.

Sophisticated Infrastructure as Code techniques are an essential part of modern SRE practices such as those used by Google®. Auto-scaling, self-healing systems, and fast deployments of new features all require that infrastructure be represented as code for maximum speed and reliability of creation.

Infrastructure as Code is defined by Morris as: an approach to infrastructure automation based on practices from software development. It emphasizes consistent, repeatable routines for provisioning and changing systems and their configuration. Changes are made to definitions and then rolled out to systems through unattended processes that include thorough validation. [203+]+

Infrastructure as Code

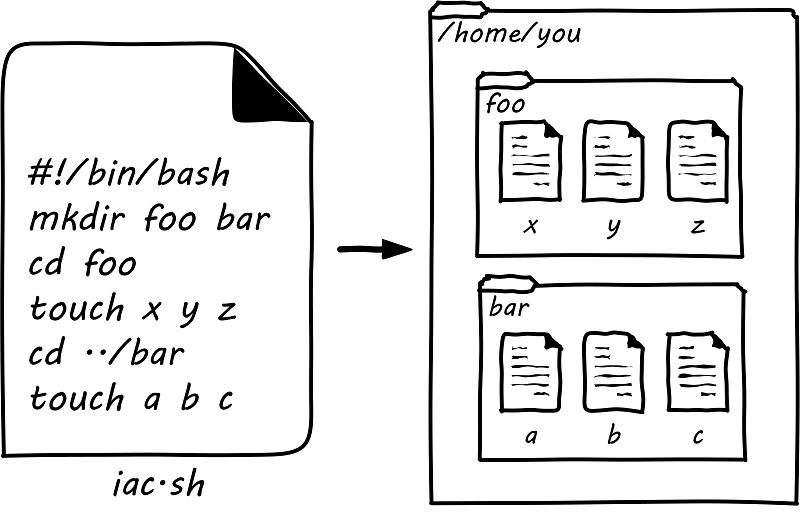

In presenting Infrastructure as Code at its simplest, we will start with the concept of a shell script. Consider the following set of commands:

$ mkdir foo bar $ cd foo $ touch x y z $ cd ../bar $ touch a b c

What does this do? It tells the computer:

-

Create (

mkdir) two directories, one named foo and one named bar -

Move (

cd) to the one named foo -

Create (

touch) three files, named x, y, and z -

Move to the directory named bar

-

Create three blank files, named a, b, and c

A user with the appropriate permissions at a UNIX® or Linux® command prompt who runs those commands will wind up with a configuration that could be visualized as in Simple Directory/File Structure Script. Directory and file layouts count as configuration and in some cases are critical.

Assume further that the same set of commands is entered into a text file thus:

#!/bin/bash mkdir foo bar cd foo touch x y z cd ../bar touch a b c

The file might be named iac.sh, and with its permissions set correctly, it could be run so that the computer executes all the commands, rather than a person running them one at a time at the console. If we did so in an empty directory, we would again wind up with that same configuration.

Beyond creating directories and files shell scripts can create and destroy virtual servers and containers, install and remove software, set up and delete users, check on the status of running processes, and much more.

| The state of the art in infrastructure configuration is not to use shell scripts at all but either policy-based infrastructure management or container definition approaches. Modern practice in cloud environments is to use templating capabilities such as Amazon CloudFormation or Hashicorp Terraform (which is emerging as a de facto platform-independent standard for cloud provisioning). |

Version Control

Consider again the iac.sh file. It is valuable. It documents intentions for how a given configuration should look. It can be run reliably on thousands of machines, and it will always give us two directories and six files. In terms of the previous section, we might choose to run it on every new server we create. Perhaps it should be established it as a known resource in our technical ecosystem. This is where version control and the broader concept of configuration management come in.

For example, a configuration file may be developed specifying the capacity of a virtual server, and what software is to be installed on it. This artifact can be checked into version control and used to re-create an equivalent server on-demand.

Tracking and controlling such work products as they evolve through change after change is important for companies of any size. The practice applies to computer code, configurations, and, increasingly, documentation, which is often written in a lightweight markup language like Markdown or Asciidoc. In terms of infrastructure, configuration management requires three capabilities:

-

The ability to backup or archive a system’s operational state (in general, not including the data it is processing — that is a different concern); taking the backup should not require taking the system down

-

The ability to compare two versions of the system’s state and identify differences

-

The ability to restore the system to a previously archived operational state

Version control is critical for any kind of system with complex, changing content, especially when many people are working on that content. Version control provides the capability of seeing the exact sequence of a complex system’s evolution and isolating any particular moment in its history or providing detailed analysis on how two versions differ. With version control, we can understand what changed and when – which is essential to coping with complexity.

While version control was always deemed important for software artifacts, it has only recently become the preferred paradigm for managing infrastructure state as well. Because of this, version control is possibly the first IT management system you should acquire and implement (perhaps as a cloud service, such as Github, Gitlab, or Bitbucket).

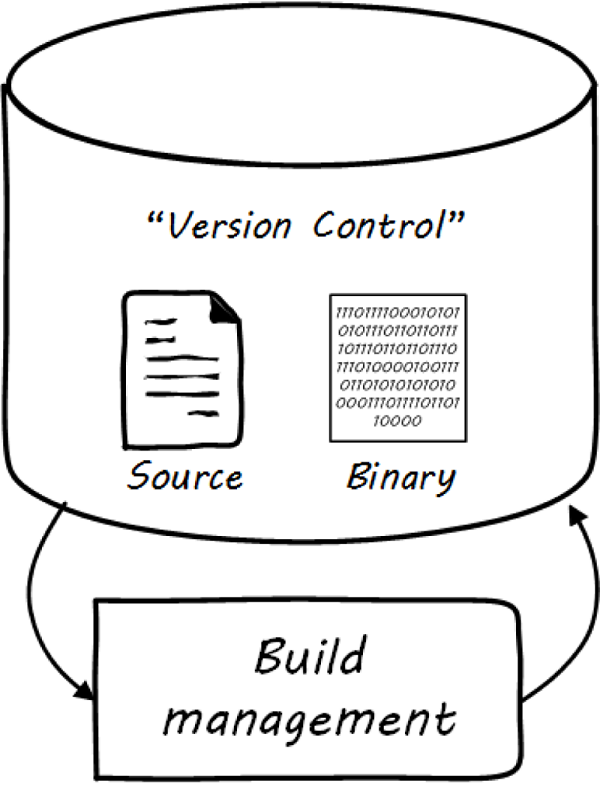

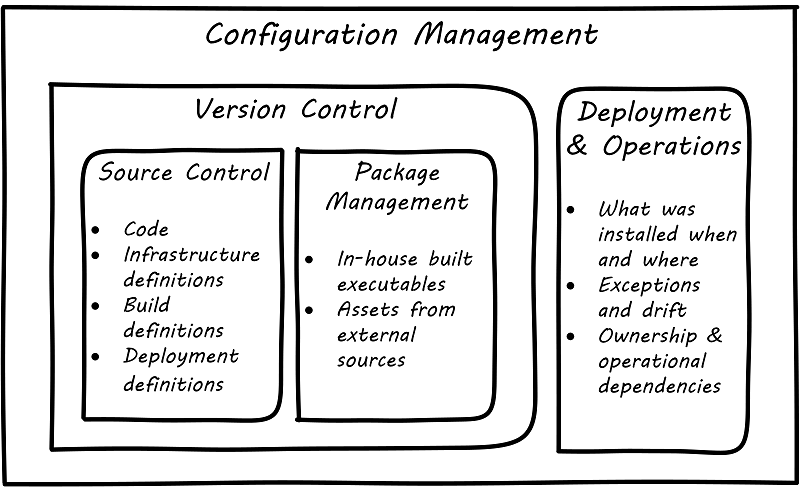

Version control in recent years increasingly distinguishes between source control and package management (see Types of Version Control and Configuration Management and its Components below): the management of binary files, as distinct from human-understandable symbolic files. It is also important to understand what versions are installed on what computers; this can be termed “deployment management”. (With the advent of containers, this is a particularly fast-changing area.)

Version control works like an advanced file system with a memory. (Actual file systems that do this are called versioning file systems.) It can remember all the changes you make to its contents, tell you the differences between any two versions, and also bring back the version you had at any point in time.

Survey research presented in the annual State of DevOps report indicates that version control is one of the most critical practices associated with high-performing IT organizations [44]. Forsgren [98] summarizes the practice of version control as:

-

Our application code is in a version control system

-

Our system configurations are in a version control system

-

Our application configurations are in a version control system

-

Our scripts for automating build and configuration are in a version control system

Source Control

Digital systems start with text files; e.g., those encoded in ASCII or Unicode. Text editors create source code, scripts, and configuration files. These will be transformed in defined ways (e.g., by compilers and build tools) but the human-understandable end of the process is mostly based on text files. In the previous section, we described a simple script that altered the state of a computer system. We care very much about when such a text file changes. One wrong character can completely alter the behavior of a large, complex system. Therefore, our configuration management approach must track to that level of detail.

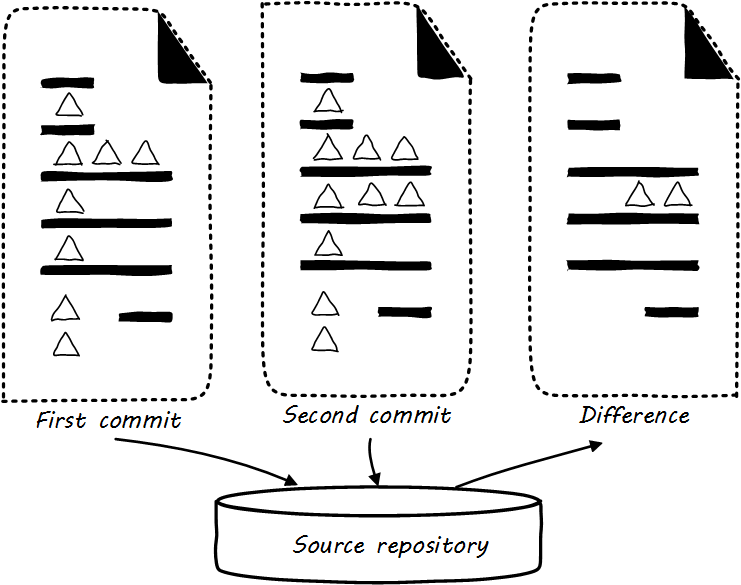

Source control is at its most powerful when dealing with textual data. It is less useful in dealing with binary data, such as image files. Text files can be analyzed for their differences in an easy to understand way (see Source Control). If “abc” is changed to “abd”, then it is clear that the third character has been changed from “c” to “d”. On the other hand, if we start with a digital image (e.g., a *.png file), alter one pixel, and compare the resulting before and after binary files in terms of their data, it would be more difficult to understand what had changed. We might be able to tell that they are two different files easily, but they would look very similar, and the difference in the binary data might be difficult to understand.

The “Commit” Concept

Although implementation details may differ, all version control systems have some concept of “commit”. As stated in Version Control with Git [181]:

In Git, a commit is used to record changes to a repository … Every Git commit represents a single, atomic changeset with respect to the previous state. Regardless of the number of directories, files, lines, or bytes that change with a commit … either all changes apply, or none do. [emphasis added]

The concept of a version or source control “commit” serves as a foundation for IT management and governance. It both represents the state of the computing system as well as providing evidence of the human activity affecting it. The “commit” identifier can be directly referenced by the build activity, which in turn is referenced by the release activity, which typically visible across the IT value chain.

Also, the concept of an atomic “commit” is essential to the concept of a “branch” — the creation of an experimental version, completely separate from the main version, so that various alterations can be tried without compromising the overall system stability. Starting at the point of a “commit”, the branched version also becomes evidence of human activity around a potential future for the system. In some environments, the branch is automatically created with the assignment of a requirement or story. In other environments, the very concept of branching is avoided. The human-understandable, contextual definitions of IT resources is sometimes called metadata.

Package Management

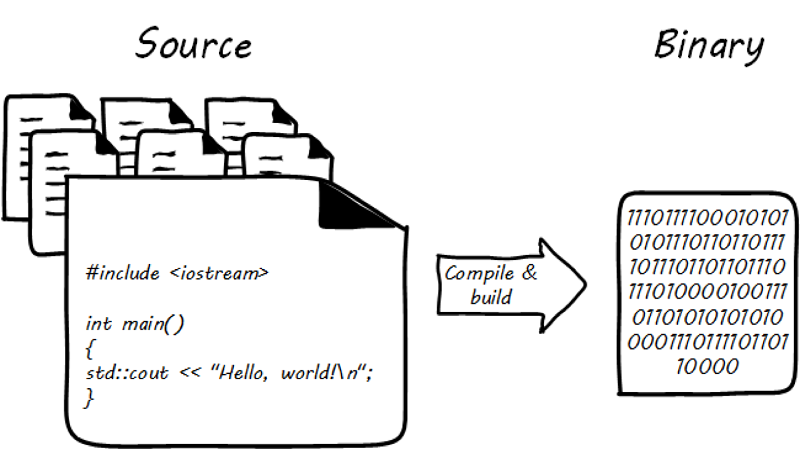

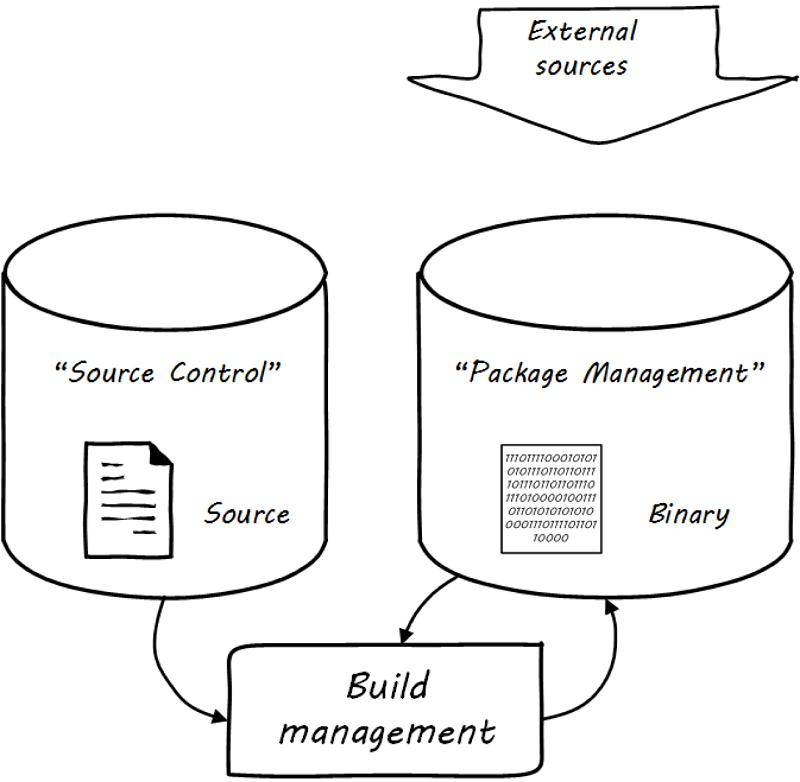

Much if not most software, once created as some kind of text-based artifact suitable for source control, must be compiled and further organized into deployable assets, often called “packages” (see Building Software).

In some organizations, it was once common for compiled binaries to be stored in the same repositories as source code (see Common Version Control). However, this is no longer considered a best practice. Source and package management are now viewed as two separate things (see Source versus Package Repos). Source repositories should be reserved for text-based artifacts whose differences can be made visible in a human-understandable way. Package repositories in contrast are for binary artifacts that can be deployed.

Package repositories also can serve as a proxy to the external world of downloadable software. That is, they are a cache, an intermediate store of the software provided by various external or “upstream” sources. For example, developers may be told to download the approved Ruby on Rails version from the local package repository, rather than going to get the latest version, which may not be suitable for the environment.

Package repositories furthermore are used to enable collaboration between teams working on large systems. Teams can check in their built components into the package repository for other teams to download. This is more efficient than everyone always building all parts of the application from the source repository.

The boundary between source and package is not hard and fast, however. We sometimes sees binary files in source repositories, such as images used in an application. Also, when interpreted languages (such as JavaScript™) are “packaged”, they still appear in the package as text files, perhaps compressed or otherwise incorporated into some larger containing structure.

While in earlier times, systems would be compiled for the target platform (e.g., compiled in a development environment, and then re-compiled for subsequent environments such as quality assurance and production) the trend today is decisively towards immutability. With the standardization brought by container-based architecture, current preference increasingly is to compile once into an immutable artifact that is deployed unchanged to all environments, with any necessary differences managed by environment-specific configuration such as source-managed text artifacts and shared secrets repositories.

Deployment Management

Version control is an important part of the overall concept of configuration management. But configuration management also covers the matter of how artifacts under version control are combined with other IT resources (such as virtual machines) to deliver services. Configuration Management and its Components elaborates on Types of Version Control to depict the relationships.

Resources in version control in general are not yet active in any value-adding sense. In order for them to deliver experiences, they must be combined with computing resources: servers (physical or virtual), storage, networking, and the rest, whether owned by the organization or leased as cloud services. The process of doing so is called deployment. Version control manages the state of the artifacts; meanwhile, deployment management (as another configuration management practice) manages the combination of those artifacts with the needed resources for value delivery.

Imperative and Declarative Approaches

Before we turned to source control, we looked at a simple script that changed the configuration of a computer. It did so in an imperative fashion. Imperative and declarative are two important terms from computer science.

In an imperative approach, one tells the computer specifically how we want to accomplish a task; e.g.:

-

Create a directory

-

Create some files

-

Create another directory

-

Create more files

Many traditional programming languages take an imperative approach. A script such as our iac.sh example is executed line by line; i.e., it is imperative.

In configuring infrastructure, scripting is in general considered “imperative”, but state-of-the-art infrastructure automation frameworks are built using a “declarative”, policy-based approach, in which the object is to define the desired end state of the resource, not the steps needed to get there. With such an approach, instead of defining a set of steps, we simply define the proper configuration as a target, saying (in essence) that “this computer should always have a directory structure thus; do what you need to do to make it so and keep it this way”.

Declarative approaches are used to ensure that the proper versions of software are always present on a system and that configurations such as Internet ports and security settings do not vary from the intended specification.

This is a complex topic, and there are advantages and disadvantages to each approach [47].

Evidence of Notability

Andrew Clay Shafer, credited as one of the originators of DevOps, stated: "In software development, version control is the foundation of every other Agile technical practice. Without version control, there is no build, no test-driven development, no continuous integration" [14 p. 99]. It is one of the four foundational areas of Agile, according to the Agile Alliance [10].

Limitations

Older platforms and approaches relied on direct command line intervention and (in the 1990s and 2000s) on GUI-based configuration tools. Organizations still relying on these approaches may struggle to adopt the principles discussed here.

Competency Category "Configuration Management and Infrastructure as Code" Example Competencies

-

Develop a simple Infrastructure as Code definition for a configured server

-

Demonstrate the ability to install, configure, and use a source control tool

-

Demonstrate the ability to install, configure, and use a package manager

-

Develop a complex Infrastructure as Code definition for a cluster of servers, optionally including load balancing and failover

Related Topics

Securing Infrastructure

| Security as an enterprise capability is covered in Governance, Risk, Security, and Compliance, as a form of applied risk management involving concepts of controls and assurance. But, securing infrastructure and applications must be a focus from the earliest stages of the digital product. |

This document recognizes the concept of securing infrastructure as critical to the practice of digital delivery:

-

Physical security

-

Networking issues

-

Core OS

-

Cloud issues

Description

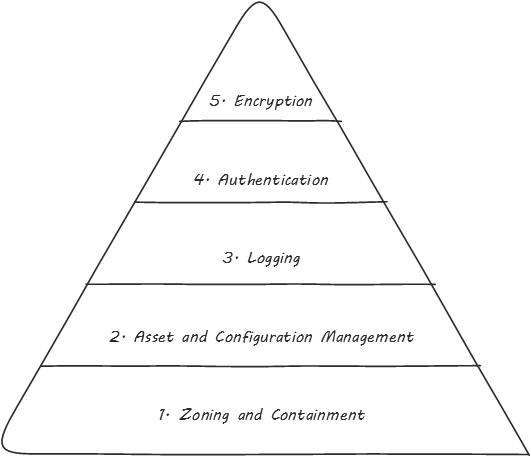

Infrastructure security, whether for on-premises computing or for cloud services, is first and foremost a security architecture issue. Many existing security control frameworks are available that describe various categories of controls which can be used to secure infrastructure. These include ISO/IEC 27002:2013, NIST 800-53, Security Services Control Catalog (jointly developed by The Open Group and The SABSA® Institute), and the Center for Internet Security Controls Version 7. These are comprehensive sets of security controls spanning many domains of security. While these control frameworks predate cloud computing, most of the control categories affecting infrastructure security apply in cloud services as well. In addition, security practitioners tasked with securing infrastructure may benefit from reference security architectures such as the Open Enterprise Security Architecture (O-ESA) from The Open Group, which describes basic approaches to securing enterprise networks, including infrastructure.

The diagram below [1] depicts some broad categories of security control types:

Common security practices

Since the advent of cloud computing, securing cloud infrastructure has been a key concern. Most of the security issues that exist in non-cloud environments exist in cloud services as well. In other words, access control, user authentication, vulnerability management, patching, securing network access, anti-malware capabilities, data loss prevention, encryption of data, and a host of other security controls that we deploy in on-premises computing require careful consideration in cloud services. The security concerns around cloud computing vary depending on whether the cloud service is SaaS, PaaS, or IaaS.

On premise versus Cloud security practices

There are also fundamental differences in security controls deployed in on-premises infrastructure (security controls may be physical or virtual), and those deployed in cloud infrastructure (which is purely virtual). These differences follow on from the shift brought by cloud computing. In on-premises computing, security architects and security solution providers had access to the physical computing networks, so physical security devices could be deployed in-line. The most common security design patterns leverage this physical access. In cloud services, there is no ability to insert security components which are physically in-line. This means that in cloud computing, we may have to utilize virtual security appliances, and virtual network segmentation solutions such as VLANs and Software-Defined Networks (SDNs) versus physical security approaches.

Another difference in securing physical versus cloud infrastructure arises in defining and implementing microsegmentation (small zones of access control). In physical networks, multiple hardware firewalls are required to achieve this. In cloud computing, VLANs and SDNs may be used to deliver equivalent capability, with some unique advantages (they are more manageable, at a lower capital expense).

In addition, the responsibility for securing cloud infrastructure varies considerably based upon the service model as well. While early focus on cloud security tended to focus on potential security concerns and gaps in security capabilities, the security community today generally acknowledges that while security concerns relating to cloud computing persist, there is also an opportunity for cloud services to “raise the bar”, improving upon baseline security for many customer organizations. Hybrid cloud computing combining public cloud services with private cloud infrastructure brings further complexity to infrastructure security.

Evidence of Notability

The need to secure computing infrastructure has been obvious and self-evident for decades, and has evolved alongside changes in popular computing paradigms, including the mainframe era, client/server computing, and now cloud computing. The need for specific, unique guidance relating to securing cloud services of various types emerged in 2009, when the Cloud Security Alliance (CSA) was first formed, and when they published Version 1 of their Security Guidance for Critical Areas of Focus in Cloud Computing. The CSA guidance is now on Version 4, and includes 14 different security domains.

Limitations

Organizations accustomed to deploying physical security capabilities on their own infrastructure may find it difficult to adapt to the challenges of securing cloud infrastructure in the various types cloud services. They may also have challenges adapting to the changes in responsibilities that are brought by the use of cloud services, where the Cloud Service Provider (CSP) is responsible for delivering many security capabilities, especially in SaaS services, and as a result the customer organization needs to specify needed security capabilities in Request for Proposals (RFPs). In addition, incident response management routines will require change.

Related Topics