Application Delivery

| Not all Digital Practitioners develop applications. As SaaS options expand, many practitioners will focus on acquiring, configuring, and operating them. However, the premise of this Competency Area is that all Digital Practitioners need to understand at least the basics of modern application delivery in order to effectively manage digital sourcing and operations. Understanding these basics will help the practitioner develop a sense of empathy for their vendors supplying digital services. |

Area Description

Based on the preceding competencies of digital value understanding and infrastructure, the practitioner can now start building.

IT systems that directly create value for non-technical users are usually called “applications”, or sometimes “services” or "service systems". As discussed in the Digital Fundamentals Competency Area, they enable value experiences in areas as diverse as consumer banking, entertainment and hospitality, and personal transportation. In fact, it is difficult to think of any aspect of modern life untouched by applications. (This overall trend is sometimes called Digital Transformation [298].)

Applications are built from software, the development of which is a core concern for any IT-centric product strategy. Software development is a well-established career and a fast-moving field, with new technologies, frameworks, and schools of thought emerging seemingly every week. This Competency Area covers applications and the software lifecycle, from requirements through construction, testing, building, and deployment into modern production environments. It also discusses earlier approaches to software development, the rise of the Agile movement, and its current manifestation in the practice of DevOps.

This document uses an engineering definition of “application”. To an electrical engineer, a toaster or a light bulb is an “application” of electricity (hence the term “appliance”). Similarly, a Customer Relationship Management (CRM) system, or a web video on-demand service, are “applications” of the digital infrastructure covered previously.

Without applications, computers would be merely a curiosity. Electronic computers were first “applied” to military needs for codebreaking and artillery calculations. After World War II, ex-military officers like Edmund Berkeley at Prudential realized computers' potential if “applied” to problems like insurance record-keeping [11]. At first, such systems required actual manual configuration or painstaking programming in complex, tedious, and unforgiving low-level programming languages. As the value of computers became obvious, investment was made in making programming easier through more powerful languages.

The history of software is well documented. Low-level languages (binary and assembler) were increasingly replaced by higher-level languages such as FORTRAN, COBOL, and C. Proprietary machine/language combinations were replaced by open standards and compilers that could take one kind of source code and build it for different hardware platforms. Many languages followed, such as Java, Visual Basic, and JavaScript. Sophisticated middleware was developed to enable ease of programming, communication across networks, and standardization of common functions.

Today, much development uses frameworks like Apache Struts, Spring, and Ruby on Rails, along with interpreted languages that take much of the friction out of building and testing code. But even today, the objective remains to create a binary executable file or files that computer hardware can “execute”; that is, turn into a computing-based value experience, mediated through devices such as workstations, laptops, smartphones, and their constituent components.

In the first decades of computing, any significant application of computing power to a new problem typically required its own infrastructure, often designed specifically for the problem. While awareness existed that computers, in theory, could be “general-purpose”, in practice, this was not so easy. Military/aerospace needs differed from corporate information systems, which differed from scientific and technical uses. And major new applications required new compute capacity.

The software and hardware needed to be specified in keeping with requirements, and acquiring them required lengthy negotiation, logistics, and installation processes. Such a project might take from nine months (on the short side) to 18 months or more from inception to production.

Hardware was dedicated and rarely re-used. Servers compatible with one system might have few other applications if they became surplus. In essence, this sort of effort had a strong component of systems engineering, as designing and optimizing the hardware component was a significant portion of the work.

Today, matters are quite different, and yet echoes of the older model persist. As mentioned, any compute workload incurs an economic cost. However, capacity is being used more efficiently and can be provisioned on-demand. Today, only a truly significant application merits its own systems engineering.

| To “provision” in an IT sense means to make the needed resources or services available for a particular purpose or consumer. |

Instead, a variety of mechanisms (as covered in the previous discussion of cloud systems) enable the sharing of compute capacity, the raw material of application development. The fungibility and agility of these mechanisms increase the velocity of creation and evolution of application software. For small and medium-sized applications, the overwhelming trend is to virtualize and run on commodity hardware and OSs. Even 15 years ago, non-trivial websites with database integration would be hosted by internal PaaS clusters at major enterprises (for example, Microsoft® ASP, COM+, and SQL server clusters could be managed as multi-tenant).

The general-purpose capabilities of virtualized public and private cloud today are robust. Assuming the organization has the financial capability to purchase computing capacity in anticipation of use, it can be instantly available when the need surfaces. Systems engineering at the hardware level is more and more independent of the application lifecycle; the trend is towards providing compute as a service, carefully specified in terms of performance, but not particular hardware.

Hardware physically dedicated to a single application is rarer, and even the largest engineered systems are more standardized so that they may one day benefit from cloud approaches. Application architectures have also become much more powerful. Interfaces (interaction points for applications to exchange information with each other, generally in an automated way) are increasingly standardized. Applications are designed to scale dynamically with the workload and are more stable and reliable than in years past.

Application Basics

Description

This section discusses the generally understood phases or stages of application development. With current trends towards Agile development, it is critical to understand that these phases are not intended as a prescriptive plan, nor is there any discussion of how long each should last. It is possible to spend months at a time on each phase, and it is possible to perform each phase in the course of a day. However, there remains a rough ordering of:

- Understanding intended outcome
- Analyzing and designing the "solution" that can support the outcome
- Building the solution
- Evaluating whether the solution supports the intended outcome (usually termed "testing")
- Delivering or transitioning the solution into a state where it is delivering the intended outcome

This set of activities is sometimes called the "Software Development Lifecycle" (SDLC). These activities are supported by increasingly automated approaches which are documented in succeeding sections.

Documenting System Intent

The application or digital product development process starts with a concept of intended outcome.

In order to design and build a digital product, the Digital Practitioner needs to express what they need the product to do. The conceptual tool used to do this has historically been termed the Requirement. The literal word “Requirement” has fallen out of favor with the rise of Agile [217], and has a number of synonyms and variations:

- Use-case
- User story
- Non-functional requirement
- Epic
- Architectural epic
- Architectural requirement

While these may differ in terms of focus and scope, the basic concept is the same — the requirement, however named, expresses some outcome, intent, or constraint the system must fulfill. This intent calls for work to be performed.

Requirements management is classically taught using the "shall" format. For example, the system shall provide …, the system shall be capable of …, etc.

More recently, Agile-aligned teams sometimes prefer user story mapping [217]. Here is an example from [68]:

“As a shopper, I can select how I want items shipped based on the actual costs of shipping to my address so that I can make the best decision.”

The basic format is:

As a <type of user>, I want <goal>, so that <some value>.

The story concept is flexible and can be aggregated and decomposed in various ways, as we will discuss in Product Management. Our interest here is in the basic stimulus for application development work that it represents.
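As an illustration only, the story format above can be treated as a small data structure. The sketch below is a minimal example, not part of any standard tool: the class name `UserStory` and its field names are hypothetical, and the wording of the sample story is adapted from the shopper example quoted earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserStory:
    """Hypothetical container for the three parts of a user story."""
    user_type: str
    goal: str
    value: str

    def render(self) -> str:
        # Fill the basic template: As a <type of user>, I want <goal>, so that <some value>.
        return f"As a {self.user_type}, I want {self.goal}, so that {self.value}."


# Sample story, adapted from the shopper example (wording is illustrative).
story = UserStory(
    user_type="shopper",
    goal="to select how my items are shipped based on actual shipping costs",
    value="I can make the best decision",
)
print(story.render())
```

Keeping the three parts separate, rather than storing one free-text sentence, is one way a backlog tool might later group stories by user type or by value; that design choice is an assumption of this sketch.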

Analysis and Design

The analysis and design of software-based systems itself employs a variety of techniques. Starting from the documented system intent, in general, the thought process will seek to answer questions such as:

- Is it possible to support the intended outcome with a digital system?
- What are the major data concepts and processing activities the proposed digital system will need to support?
- What are the general attributes or major classifications of such a potential solution? Will it be a transactional system, an analytic system?
- How do these major concepts decompose into finer-grained concepts, and how are these finer-grained concepts translated into executable artifacts such as source code and computable data structures?

A variety of tools and approaches may be used in analysis and design. Sometimes, the analysis and design is entirely internal to the person building the system. Sometimes, it may be sketched on paper or a whiteboard. There are a wide variety of more formalized approaches (process models, data models, systems models) used as these systems and organizations scale up; these will be discussed in future Competency Areas.

Construction

When an apparently feasible approach is determined, construction may commence. How formally "apparently feasible" is established depends greatly on the organization and the scale of the system. "You start coding and I’ll go find out what the users want" is an old joke in IT development. It represents a long-standing pair of questions: Are we ready to start building? Are we engaged in excessive analysis (sometimes called "analysis paralysis")? Actually writing source code and executing it, preferably with knowledgeable stakeholders evaluating the results, provides unambiguous confirmation of whether a given approach is feasible.

Actual construction techniques will typically center around the creation of text files in specialized computing languages such as C++, JavaScript, Java®, Ruby, or Python. These languages are the fundamental mechanisms for accessing the core digital infrastructure services of compute, transmission, and storage discussed previously. There is a vast variety of instructional material available on the syntax and appropriate techniques for using such languages.

Testing

Evaluating whether a developed system fulfills the intended outcome is generally called testing. There is a wide variety of testing types, such as:

- Functional testing (does the system, or specific component of it, deliver the intended outcomes as specified in requirements?)
- Integration testing (if the system is modularized, can modules interoperate as needed to fulfill the intended outcomes?)
- Usability testing (can operators navigate the system intuitively, given training that makes economic sense? are there risks of operator error presented by system design choices?)
- Performance testing (does the system scale to necessary volumes and speeds?)
- Security testing (does the system resist unauthorized attempts to access or change it?)

Although testing is logically distinct from construction, in modern practices they are tightly integrated and automated, as will be discussed below.
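To make the difference between the first two testing types concrete, here is a minimal sketch. The two components, `parse_amount` and `add_tax`, are hypothetical and invented for this illustration. The first two tests exercise each component on its own (functional testing); the third checks that the components interoperate (integration testing).

```python
def parse_amount(text: str) -> int:
    """Convert a string such as '12.50' into an integer number of cents."""
    dollars, _, cents = text.partition(".")
    # Pad so that '12.5' and '12' are read as 12.50 and 12.00.
    return int(dollars) * 100 + int((cents + "00")[:2])


def add_tax(cents: int, rate_percent: int) -> int:
    """Add tax to an amount in cents, rounding the tax down to whole cents."""
    return cents + cents * rate_percent // 100


def test_parse_amount():
    # Functional test: one component against its specified behavior.
    assert parse_amount("12.50") == 1250
    assert parse_amount("3") == 300


def test_add_tax():
    # Functional test of the second component in isolation.
    assert add_tax(1000, 8) == 1080


def test_parse_then_tax():
    # Integration test: output of one module feeds the other.
    assert add_tax(parse_amount("12.50"), 10) == 1375


for test in (test_parse_amount, test_add_tax, test_parse_then_tax):
    test()
```

The test functions follow the naming style that common Python test runners discover automatically, but here they are simply called in a loop so that the sketch has no dependencies.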

Delivery

Finally, once the system completes construction and testing activities, it must be made available (delivered or transitioned) into a state where it can fulfill its intended outcomes. This is sometimes called the state of "production", discussed below. Delivery may take two forms:

- Moving installable "packages" of software to a location where users can install them directly on devices of their choice; this includes delivery media such as DVDs as well as network-accessible locations
- Installing the software so that its benefits - its intended outcomes - are available "as a service" via networks; outcomes may be delivered via the interface of an application or "app" on a mobile phone or personal computer, a web page, an Application Programming Interface (API), or other behavior of devices responding to the programmed application (e.g., IoT)

Delivery is increasingly automated, as will be covered in the section on DevOps technical practices.

Evidence of Notability

The basic concepts of the "software lifecycle" as expressed here are broadly discussed in software engineering; e.g., [140, 276, 266].

Limitations

Application construction, including programming source code, is not necessary (in general) when consuming SaaS. Many companies prefer to avoid development as much as possible, relying on commercially available services. Such companies still may be pursuing a digital strategy in important regards.

Related Topics

Agile Software Development

Description

Waterfall Development

When a new analyst joined the large systems integrator Andersen Consulting (now Accenture) in 1998, they would be schooled in something called the Business Integration Method (BIM). The BIM was a classic expression of what is called “waterfall development”.

What is waterfall development? It is a controversial question. Winston Royce, the theorist usually credited with first describing the model, presented it in order to critique it [241]. Military contracting and management consultancy practices, however, embraced it, as it provided an illusion of certainty. The fact that computer systems until recently included a substantial component of hardware systems engineering may also have contributed.

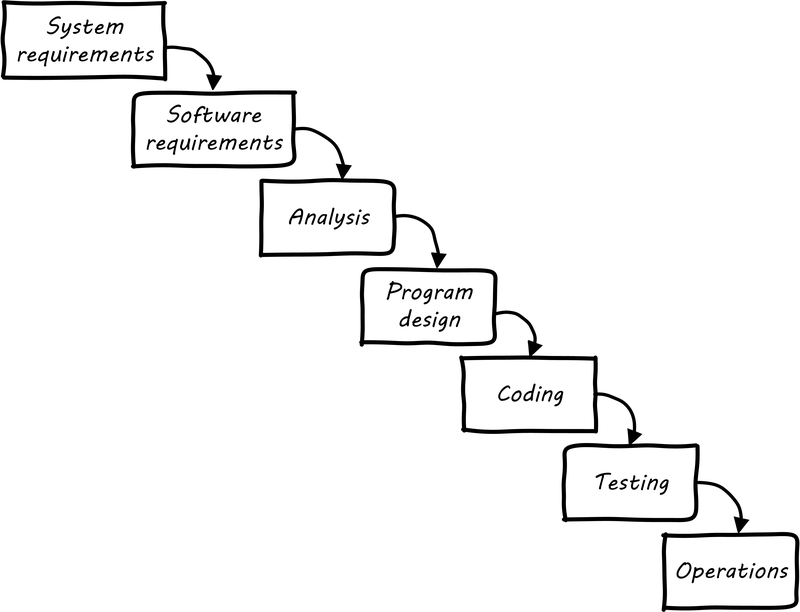

Waterfall development as a term has become associated with a number of practices. The original illustration resembled the figure Waterfall Lifecycle (similar to [241]):

First, requirements need to be extensively captured and analyzed before the work of development can commence. So, the project team would develop enormous spreadsheets of requirements, spending weeks on making sure that they represented what “the customer” wanted. The objective was to get the customer’s signature. Any further alterations could be profitably billed as “change requests”.

The analysis phase was used to develop a more structured understanding of the requirements; e.g., conceptual and logical data models, process models, business rules, and so forth.

In the design phase, the actual technical platforms would be chosen; major subsystems determined with their connection points, initial capacity analysis (volumetrics) translated into system sizing, and so forth. (Perhaps hardware would not be ordered until this point, leading to issues with developers now being “ready”, but hardware not being available for weeks or months yet.)

Only after extensive requirements, analysis, and design would coding take place (implementation). Furthermore, there was a separation of duties between developers and testers. Developers would write code and testers would try to break it, filing bug reports to which the developers would then need to respond.

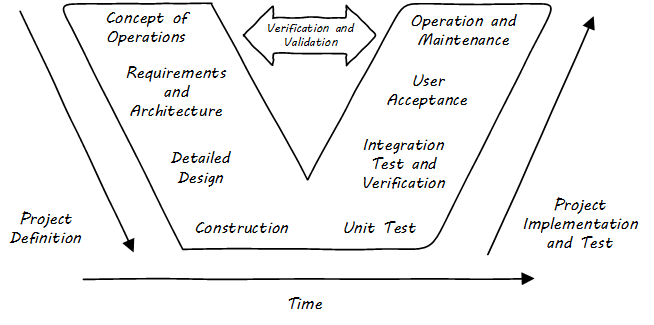

Another model sometimes encountered at this time was the V-model (see V-Model[1]). This was intended to better represent the various levels of abstraction operating in the systems delivery activity. Requirements operate at various levels, from high-level business intent through detailed specifications. It is all too possible that a system is “successfully” implemented at lower levels of specification, but fails to satisfy the original higher-level intent.

The failures of these approaches at scale are by now well known. Large distributed teams would wrestle with thousands of requirements. The customer would “sign off” on multiple large binders, with widely varying degrees of understanding of what they were agreeing to. Documentation became an end in itself and did not meet its objectives of ensuring continuity if staff turned over. The development team would design and build extensive product implementations without checking the results with customers. They would also defer testing that various component parts would effectively interoperate until the very end of the project, when the time came to assemble the whole system.

Failure after failure of this approach is apparent in the historical record [111]. Recognition of such failures, dating from the 1960s, led to the perception of a “software crisis”.

However, many large systems were effectively constructed and operated during the “waterfall years”, and there are reasonable criticisms of the concept of a “software crisis” [39].

Successful development efforts existed back to the earliest days of computing (otherwise, there probably wouldn’t be computers, or at least not so many). Many of these successful efforts used prototypes and other means of building understanding and proving out approaches. But highly publicized failures continued, and a substantial movement against “waterfall” development started to take shape.

Origins and Practices of Agile Development

By the 1990s, a number of thought leaders in software development had noticed some common themes with what seemed to work and what didn’t. Kent Beck developed a methodology known as “eXtreme Programming” (XP) [24]. XP pioneered the concepts of iterative, fast-cycle development with ongoing stakeholder feedback, coupled with test-driven development, ongoing refactoring, pair programming, and other practices. (More on the specifics of these in the next section.)

Various authors assembled in 2001 and developed the Agile Manifesto [8], which further emphasized an emergent set of values and practices:

The Manifesto authors further stated:

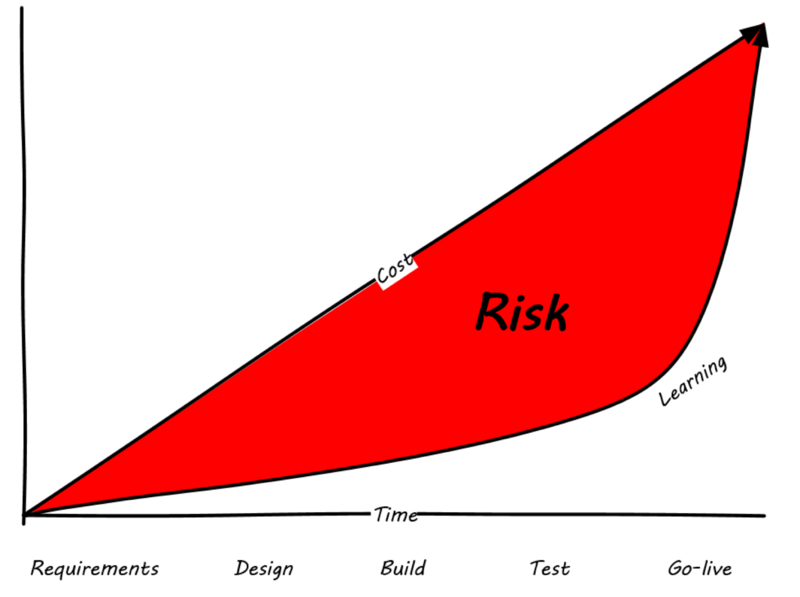

Agile methodologists emphasize that software development is a learning process. In general, learning (and the value derived from it) is not complete until the system is functioning to some degree of capability. As such, methods that postpone the actual, integrated verification of the system increase risk. Alistair Cockburn visualizes risk as the gap between the ongoing expenditure of funds and the lag in demonstrating valuable learning (see Waterfall Risk, similar to [66]).

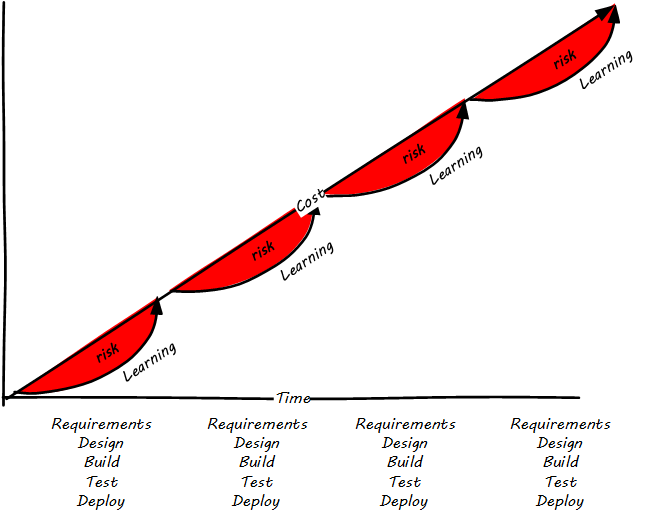

Because Agile approaches emphasize delivering smaller batches of complete functionality, this risk gap is minimized (see Agile Risk, similar to [66]).

The Agile models for developing software aligned with the rise of cloud and web-scale IT. As new customer-facing sites like Flickr®, Amazon, Netflix, Etsy®, and Facebook scaled to massive proportions, it became increasingly clear that waterfall approaches were incompatible with their needs. Because these systems were directly user-facing, delivering monetized value in fast-moving competitive marketplaces, they required a degree of responsiveness previously not seen in “back-office” IT or military-aerospace domains (the major forms that large-scale system development had taken to date). We will talk more of product-centricity and the overall DevOps movement in the next section.

This new world did not think in terms of large requirements specifications. Capturing a requirement, analyzing and designing to it, implementing it, testing that implementation, and deploying the result to the end user for feedback became something that needed to happen at speed, with high repeatability. Requirements “backlogs” were (and are) never “done”, and increasingly were the subject of ongoing re-prioritization, without high-overhead project “change” barriers.

These user-facing, web-based systems integrate the SDLC tightly with operational concerns. The sheer size and complexity of these systems required much more incremental and iterative approaches to delivery, as the system can never be taken offline for the “next major release” to be installed. New functionality is moved rapidly in small chunks into a user-facing, operational status, as opposed to previous models where vendors would develop software on an annual or longer version cycle, to be packaged onto media for resale to distant customers.

Contract software development never gained favor in the Silicon Valley web-scale community; developers and operators are typically part of the same economic organization. So, it was possible to start breaking down the walls between “development” and “operations”, and that is just what happened.

Large-scale systems are complex and unpredictable. New features are never fully understood until they are deployed at scale to the real end user base. Therefore, large-scale web properties also started to “test in production” (more on this in the Operations Competency Area) in the sense that they would deploy new functionality to only some of their users. Rather than trying to increase testing to better understand things before deployment, these new firms accepted a seemingly higher level of risk in exposing new functionality sooner. (Part of their belief is that this is actually lower risk, because the impacts are never fully understood in any event.)
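One common way to expose new functionality to only some users is a percentage rollout. The sketch below is a minimal illustration under stated assumptions, not a description of how any of the firms named above implement it: the function name `in_rollout`, the feature name, and the user identifiers are all hypothetical. Each user is hashed into one of 100 stable buckets, and the feature is enabled for the buckets below the chosen percentage.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Return True if this user is among the `percent`% who see `feature`."""
    # Hash feature and user together so each feature gets its own split
    # and the same user always lands in the same bucket.
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent


# Hypothetical usage: show a new checkout flow to roughly 5% of users.
if in_rollout("user-1042", "new-checkout", 5):
    print("serve new checkout flow")
else:
    print("serve existing checkout flow")
```

Because the bucket depends only on the feature and user identifiers, raising the percentage later keeps every already-exposed user in the exposed group, which makes it possible to widen a rollout gradually.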

Evidence of Notability

See [174] for a thorough history of Agile and its antecedents. Agile is recognized as notable in leading industry and academic guidance [276, 140] and has a large, active, and highly visible community (see http://www.agilealliance.org). It is increasingly influential on non-software activities as well [234, 233].

Limitations

Agile development is not as relevant when packaged software is acquired. Such software has a more repeatable pattern of implementation, and more up-front planning may be appropriate.

Related Topics

DevOps Technical Practices

Description

Consider this inquiry by Mary and Tom Poppendieck:

The implicit goal is that the organization should be able to change and deploy one line of code, from idea to production in under an hour, and in fact, might want to do so on an ongoing basis. There is deep Lean/Agile theory behind this objective; a theory developed in reaction to the pattern of massive software failures that characterized IT in the first 50 years of its existence. (This document discusses systems theory, including the concept of feedback, in Context II and other aspects of Agile theory, including the ideas of Lean Product Development, in Contexts II and III.)

Achieving this goal is feasible but requires new approaches. Various practitioners have explored this problem, with great success. Key initial milestones included:

- The establishment of “test-driven development” as a key best practice in creating software [24]
- Duvall’s book Continuous Integration [92]
- Allspaw & Hammond’s seminal “10 Deploys a Day” presentation describing technical practices at Flickr [13]
- Humble & Farley’s Continuous Delivery [136]
- The publication of The Phoenix Project [165]

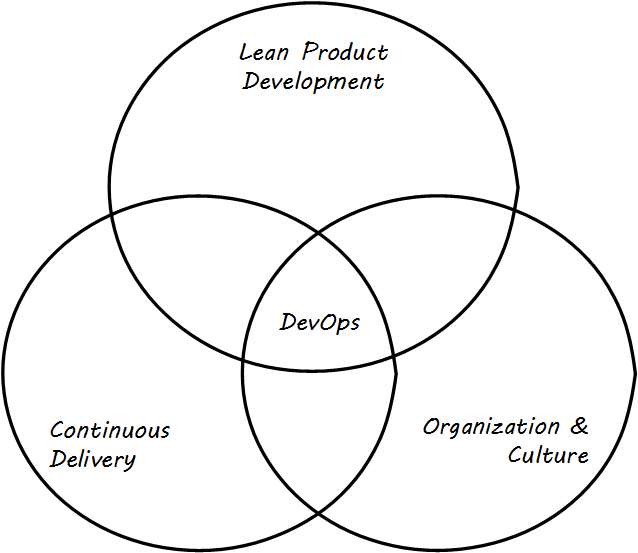

Defining DevOps

“DevOps” is a broad term, encompassing product management, continuous delivery, organization structure, team behaviors, and culture (see DevOps Definition). Some of these topics will not be covered until Contexts II and III in this document. At an execution level, the fundamental goal of moving smaller changes more quickly through the pipeline is a common theme. Other guiding principles include: “If it hurts, do it more frequently”. (This is in part a response to the poor practice, or antipattern, of deferring integration testing and deployment until those tasks are so big as to be unmanageable.) There is a great deal written on the topic of DevOps currently; the Humble/Farley book is recommended as an introduction. Let’s go into a little detail on some essential Agile/DevOps practices:

- Test-driven development
- Ongoing refactoring
- Continuous integration
- Continuous deployment

Continuous Delivery Pipeline

The infrastructure Competency Area suggests that the Digital Practitioner may need to select:

- Development stack (language, framework, and associated enablers such as database and application server)
- Cloud provider that supports the chosen stack
- Version control
- Deployment capability

The assumption is that the Digital Practitioner is going to start immediately with a continuous delivery pipeline.

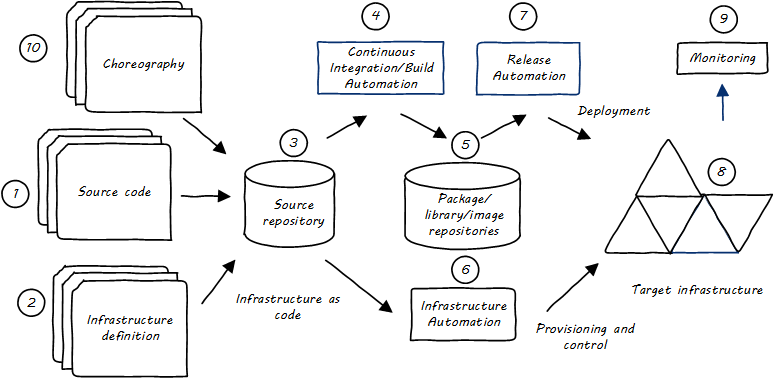

What is meant by a continuous delivery pipeline? A Simple Continuous Delivery Toolchain presents a simplified, starting overview.

First, some potential for value is identified. It is refined through product management techniques into a feature — some specific set of functionality that when complete will enable the value proposition (i.e., as a moment of truth).

- The feature is expressed as some set of IT work, today usually in small increments lasting between one and four weeks (this of course varies). Software development commences; e.g., the creation of Java components by developers who first write tests, and then write code that satisfies the test.
- More or less simultaneously, the infrastructure configuration is also refined, also "as-code".
- The source repository contains both functional and infrastructure artifacts (text-based).
- When the repository detects the new “check-in”, it contacts the build choreography manager, which launches a dedicated environment to build and test the new code. The environment is configured using “Infrastructure as Code” techniques; in this way, it can be created automatically and quickly.
- If the code passes all tests, the compiled and built binary executables may then be “checked in” to a package management repository.
- Infrastructure choreography may be invoked at various points to provision and manage compute, storage, and networking resources (on-premise or cloud-based).
- Release automation deploys immutable binary packages to target infrastructure.
- Examples of such infrastructure may include quality assurance, user acceptance, and production environments.
- The production system is monitored for availability and performance.
- An emerging practice is to manage the end-to-end flow of all of the above activities as "choreography", providing comprehensive traceability of configuration and deployment activities across the pipeline.
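The core of the flow above (check-in, build and test, package, release) can be sketched in a few lines. This is a deliberately simplified model and not the interface of any real build or release tool: the `Pipeline` class, its method names, and the commit and environment identifiers are all hypothetical, and plain dictionaries stand in for the package repository and the target environments.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Pipeline:
    """Toy model of a continuous delivery pipeline (all names hypothetical)."""
    tests: List[Callable[[], bool]]
    package_repo: Dict[str, str] = field(default_factory=dict)  # commit id -> package
    environments: Dict[str, str] = field(default_factory=dict)  # environment -> package

    def on_check_in(self, commit_id: str) -> bool:
        """Build and test a commit; store a package only if every test passes."""
        if not all(test() for test in self.tests):
            return False
        self.package_repo[commit_id] = f"package-{commit_id}"
        return True

    def release(self, commit_id: str, environment: str) -> None:
        """Deploy the already-built package, unchanged, to a target environment."""
        # A KeyError here means the commit never produced a package.
        self.environments[environment] = self.package_repo[commit_id]


pipeline = Pipeline(tests=[lambda: 1 + 1 == 2])
if pipeline.on_check_in("abc123"):
    for env in ("quality-assurance", "user-acceptance", "production"):
        pipeline.release("abc123", env)
print(pipeline.environments)
```

The point of the sketch is the ordering constraint: a package exists only for commits that passed their tests, and every environment receives the same package rather than a fresh build.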

Test Automation and Test-Driven Development

Testing software and systems is a critically important part of digital product development. The earliest concepts of waterfall development called for it explicitly, and “software tester” as a role and “software quality assurance” as a practice have long histories. Evolutionary approaches to software have a potential major issue with software testing:

As a consequence of the introduction of new bugs, program maintenance requires far more system testing per statement written than any other programming. Theoretically, after each fix one must run the entire bank of test cases previously run against the system, to ensure that it has not been damaged in an obscure way. In practice, such regression testing must indeed approximate this theoretical ideal, and it is very costly.

Mythical Man-Month

This issue was and is well known to thought leaders in Agile software development. The key response has been the concept of automated testing so that any change in the software can be immediately validated before more development along those lines continues. One pioneering tool was JUnit:

The reason JUnit is important … is that the presence of this tiny tool has been essential to a fundamental shift for many programmers. A shift where testing has moved to a front and central part of programming. People have advocated it before, but JUnit made it happen more than anything else.

http://martinfowler.com/books/meszaros.html

From the reality that regression testing was “very costly” (as stated by Brooks in the above quote), the emergence of tools like JUnit (coupled with increasing computer power and availability) changed the face of software development, allowing the ongoing evolution of software systems in ways not previously possible.

In test-driven development, the essence is to write code that tests itself, and in fact to write the test before writing any code. This is done through the creation of test harnesses and the tight association of tests with requirements. The logical culmination of test-driven development was expressed by Kent Beck in eXtreme Programming: write the test first [24]. Thus:

- Given a “user story” (i.e., system intent), figure out a test that will demonstrate its successful implementation
- Write this test using the established testing framework
- Write the code that fulfills the test
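The three steps above can be sketched with Python's standard `unittest` framework (a relative of the JUnit tool mentioned earlier). The story used is the shopper example from the discussion of system intent; the function `shipping_options` and its behavior are assumptions made for this illustration. Note that the test class appears first in the file, mirroring the order in which the work would be done.

```python
import unittest


# Steps 1 and 2: express the story as tests, written before the code exists.
class ShippingOptionsTest(unittest.TestCase):
    def test_options_are_ordered_by_cost(self):
        costs = {"overnight": 24.00, "ground": 6.50, "two_day": 12.00}
        self.assertEqual(shipping_options(costs), ["ground", "two_day", "overnight"])

    def test_no_methods_means_no_options(self):
        self.assertEqual(shipping_options({}), [])


# Step 3: write just enough code to make the tests pass.
def shipping_options(costs_by_method):
    """Return shipping method names ordered from cheapest to most expensive."""
    return sorted(costs_by_method, key=costs_by_method.get)


def run_tests():
    """Run the test case above and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ShippingOptionsTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


result = run_tests()
```

Running the tests before step 3 is written would fail with a `NameError`, which is the expected starting point in this style of work: the failing test defines what "done" means for the story.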

Refactoring and technical debt

Test-driven development enables the next major practice, that of refactoring. Refactoring is how technical debt is addressed. What is technical debt? Technical debt is a term coined by Ward Cunningham and is now defined by Wikipedia as:

… the eventual consequences of poor system design, software architecture, or software development within a codebase. The debt can be thought of as work that needs to be done before a particular job can be considered complete or proper. If the debt is not repaid, then it will keep on accumulating interest, making it hard to implement changes later on … Analogous to monetary debt, technical debt is not necessarily a bad thing, and sometimes technical debt is required to move projects forward. [303]

Test-driven development ensures that the system’s functionality remains consistent, while refactoring provides a means to address technical debt as part of ongoing development activities. Prioritizing the relative investment of repaying technical debt versus developing new functionality will be examined in future sections.
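A small sketch may help show how tests make refactoring safe. Everything here is hypothetical: `total_before` stands for an original implementation with the discount arithmetic tangled into a loop, and `total_after` for a refactored version that pulls it into a helper. The same checks are run against both, so any change in behavior introduced by the refactoring would be caught.

```python
def total_before(prices, member):
    """Original version: discount logic is tangled into the loop."""
    total = 0
    for price in prices:
        if member:
            total = total + price - price * 10 // 100
        else:
            total = total + price
    return total


def discounted(price, percent):
    """Apply a whole-number percentage discount to a price in cents."""
    return price - price * percent // 100


def total_after(prices, member):
    """Refactored version: same behavior, discount rule isolated in a helper."""
    percent = 10 if member else 0
    return sum(discounted(price, percent) for price in prices)


def check(total_fn):
    """Behavioral checks that must hold before and after refactoring."""
    assert total_fn([1000, 250], member=False) == 1250
    assert total_fn([1000, 250], member=True) == 1125
    assert total_fn([], member=True) == 0


check(total_before)
check(total_after)
```

The refactoring changes structure only; the tests are what justify the claim that functionality has stayed consistent.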

Technical debt is covered further elsewhere in this document.

Continuous Integration

As systems engineering approaches transform to cloud and Infrastructure as Code, a large and increasing percentage of IT work takes the form of altering text files and tracking their versions. This was covered in the discussion of configuration management with artifacts such as scripts being created to drive the provisioning and configuring of computing resources. Approaches which encourage ongoing development and evolution are increasingly recognized as less risky since systems do not respond well to big “batches” of change. An important concept is that of “continuous integration”, popularized by Paul Duvall in his book of the same name [92].

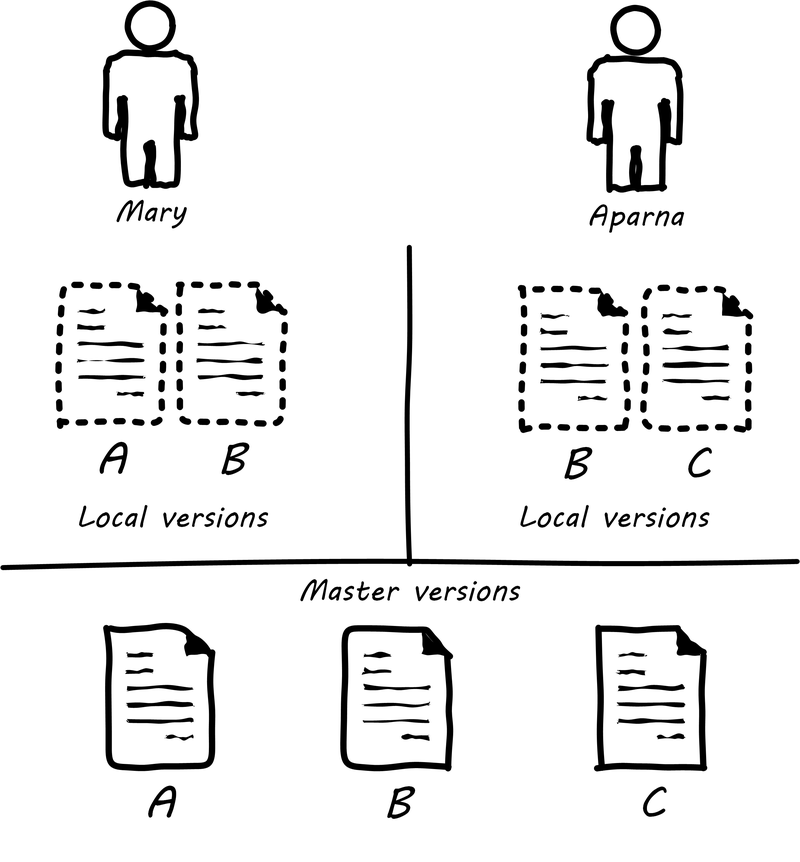

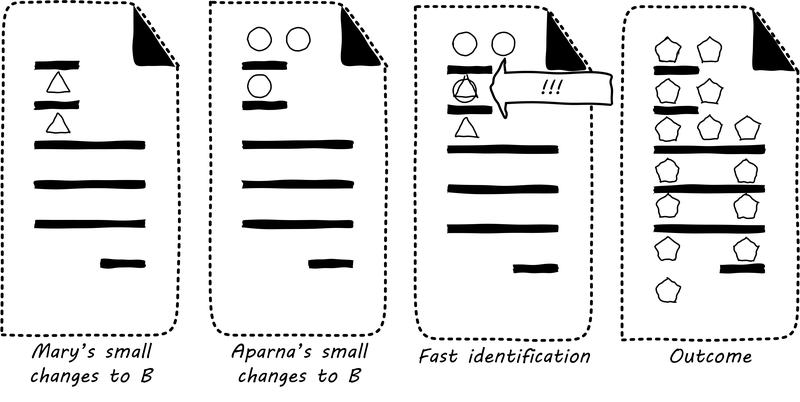

In order to understand why continuous integration is important, it is necessary to discuss further the concept of source control and how it is employed in real-world settings. Imagine Mary has been working for some time with her partner Aparna in their startup (or on a small team) and they have three code modules (see File B being Worked on by Two People). Mary is writing the web front end (file A), Aparna is writing the administrative tools and reporting (file C), and they both partner on the data access layer (file B). The conflict, of course, arises on file B that they both need to work on. A and C are mostly independent of each other, but changes to any part of B can have an impact on both their modules.

If changes are frequently needed to B, and yet they cannot split it into logically separate modules, they have a problem; they cannot both work on the same file at the same time. They are each concerned that the other does not introduce changes into B that “break” the code in their own modules A and C.

In smaller environments, or under older practices, perhaps there is no conflict, or perhaps they can agree to take turns. But even if they are taking turns, Mary still needs to test her code in A to make sure it has not been broken by changes Aparna made in B. And what if they really both need to work on B (see File B being Worked on by Two People) at the same time?

Given that they have version control in place, each of them works on a “local” copy of the file (see illustration “File B being worked on by two people”).

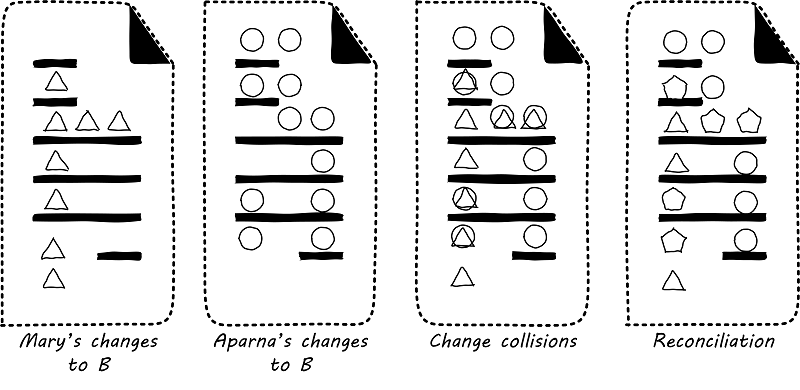

That way, they can move ahead on their local workstations. But when the time comes to combine both of their work, they may find themselves in “merge hell”. They may have chosen very different approaches to solving the same problem, and code may need massive revision to settle on one codebase. For example, in the accompanying illustration, Mary’s changes to B are represented by triangles and Aparna’s are represented by circles. They each had a local version on their workstation for far too long, without talking to each other.

The diagrams represent the changes graphically; of course, with real code, the different graphics represent different development approaches each person took. For example, Mary had certain needs for how errors were handled, while Aparna had different needs.

In Merge Hell, where triangles and circles overlap, Mary and Aparna painstakingly have to go through and put in a consolidated error handling approach, so that the code supports both of their needs. The problem, of course, is there are now three ways errors are being handled in the code. This is not good, but they did not have time to go back and fix all the cases. This is a classic example of technical debt.

Suppose instead that they had been checking in every day. They can identify the first collision quickly (see Catching Errors Quickly is Valuable), and have a conversation about what the best error handling approach is. This saves them both the rework of fixing the collisions, and the technical debt they might have otherwise accepted:

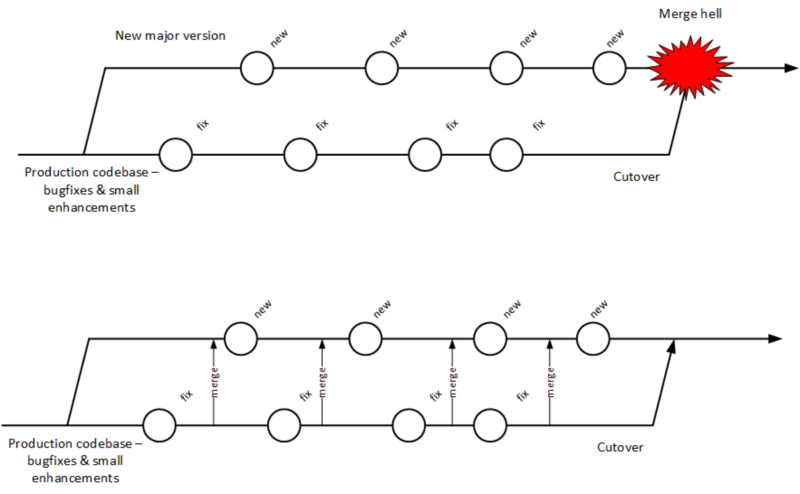
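The value of catching a collision early can be illustrated with a minimal sketch. It assumes each version of file B is available as a list of lines and uses Python's standard `difflib` to find the lines of the shared base version that each person changed. The function names and the sample file contents are hypothetical, and treating an insertion as touching the line it precedes is a simplification; real version control systems use more sophisticated three-way merges.

```python
from difflib import SequenceMatcher


def changed_base_lines(base, edited):
    """Return the indices of lines in `base` that `edited` replaces, deletes, or inserts before."""
    changed = set()
    matcher = SequenceMatcher(a=base, b=edited, autojunk=False)
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            changed.update(range(i1, i2))
        elif tag == "insert":
            # Simplification: an insertion is attributed to the base line it precedes.
            changed.add(i1)
    return changed


def collisions(base, mine, theirs):
    """Return the base line indices that both edited copies touched."""
    return sorted(changed_base_lines(base, mine) & changed_base_lines(base, theirs))


# Hypothetical contents of file B (the data access layer).
base = ["open_connection()", "run_query()", "handle_error()", "close_connection()"]
mary = ["open_connection()", "run_query()", "raise_front_end_error()", "close_connection()"]
aparna = ["open_connection()", "run_query()", "log_and_retry()", "close_connection()"]

print(collisions(base, mary, aparna))
```

Run daily, a check of this kind surfaces the first overlapping change (here, the error handling line) while it is still a short conversation rather than a large rework.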

These problems have driven the evolution of software configuration management for decades. In previous methods, to develop a new release, the code would be copied into a very long-lived “branch” (a version of the code to receive independent enhancement). Ongoing “maintenance” fixes of the existing codebase would also continue, and the two codebases would inevitably diverge. Switching over to the “new” codebase might mean that once-fixed bugs (bugs that had been addressed by maintenance activities) would show up again, and, logically, this would not be acceptable. So, when the newer development was complete, it would need to be merged back into the older line of code, and this was rarely if ever easy (again, “merge hell”). In a worst-case scenario, the new development might have to be redone.

Enter continuous integration (see Big Bang versus Continuous Integration). As presented in [92] the key practices (note similarities to the pipeline discussion) include:

- Developers run private builds including their automated tests before committing to source control

- Developers check in to source control at least daily

- Distributed version control systems such as Git are especially popular, although older centralized products are starting to adopt some of their functionality

- Integration builds happen several times a day or more on a separate, dedicated machine

- 100% of tests must pass for each build, and fixing failed builds is the highest priority

- A package or similar executable artifact is produced for functional testing

- A defined package repository exists as a definitive location for the build output

Rather than locking a file so that only one person can work on it at a time, it has been found that the best approach is to allow developers to actually make multiple copies of such a file or file set and work on them simultaneously.

This is the principle of continuous integration at work. If the developers are continually pulling each other’s work into their own working copies, and continually testing that nothing has broken, then distributed development can take place. So, for a developer, the day’s work might be as follows:

8 AM: The developer checks out files from the master source repository to a local branch on the workstation. Because files are not committed unless they pass all tests, the code is clean. The developer selects or is assigned a user story (requirement) that they will now develop.

8:30 AM: The developer defines a test and starts developing the code to fulfill it.

10 AM: The developer is close to wrapping up the first requirement. They check the source repository. Their partner has checked in some new code, so they pull it down to their local repository. They run all the automated tests and nothing breaks, so all is good.

10:30 AM: They complete their first update of the day; it passes all tests on the local workstation. They commit it to the master repository. The master repository is continually monitored by the build manager, which takes the newly committed code and deploys it, along with all necessary configurations, to a dedicated build server (which might be just a virtual machine or transient container). All tests pass there (the test defined as indicating success for the module, as well as a host of older tests that are routinely run whenever the code is updated).

11:00 AM: Their partner pulls these changes into their working directory. Unfortunately, some of the changes conflict with work the partner is doing. They briefly consult and figure out a mutually-acceptable approach.

Controlling simultaneous changes to a common file is only one benefit of continuous integration. When software is developed by teams, even if each team has its own artifacts, the system often fails to “come together” for higher-order testing to confirm that all the parts are working correctly together. Discrepancies are often found in the interfaces between components; when component A calls component B, it may receive output it did not expect and processing halts. Continuous integration ensures that such issues are caught early.
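As a minimal sketch of catching an interface discrepancy early, the following exercises one component against the real output of another rather than against a hand-written stub. Both components, their function names, and the record fields are hypothetical and stand in for whatever modules a team actually integrates.

```python
def get_balance_record(account_number):
    """Component B (hypothetical data access layer): return a balance record."""
    return {"account": account_number, "amount_cents": 12550}


def format_balance(record):
    """Component A (hypothetical front end): relies on the 'amount_cents' field."""
    return f"${record['amount_cents'] / 100:.2f}"


def integration_check():
    """Feed B's actual output into A and fail loudly if the interface has drifted."""
    record = get_balance_record("12345")
    missing = {"account", "amount_cents"} - record.keys()
    if missing:
        raise AssertionError(f"B's output lacks fields A relies on: {sorted(missing)}")
    return format_balance(record)


print(integration_check())
```

If the owner of component B renamed `amount_cents`, this check would fail on the next integration build instead of halting processing later in a shared environment.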

Continuous Integration Choreography

DevOps and continuous delivery call for automating everything that can be automated. This goal led to the creation of continuous integration managers such as Hudson, Jenkins, Travis CI, and Bamboo. Build managers may control any or all of the following steps:

- Detecting changes in version control repositories and building software in response

- Alternately, building software on a fixed (e.g., nightly) schedule

- Compiling source code and linking it to libraries

- Executing automated tests

- Combining compiled artifacts with other resources into installable packages

- Registering new and updated packages in the package management repository, for deployment into downstream environments

- In some cases, driving deployment into downstream environments, including production (this can be done directly by the build manager, or through the build manager sending a message to a deployment management tool)

Build managers play a critical, central role in the modern, automated pipeline and will likely be a center of attention for the new Digital Practitioner in their career.
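The steps a build manager choreographs can be sketched as an ordered list of stages that halts at the first failure. This is an illustration only, not the behavior of any particular product: the stage names, the `run_pipeline` function, and the `BuildResult` type are assumptions made for the example, and each stage is reduced to a callable returning `True` or `False`.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class BuildResult:
    succeeded: bool
    completed: List[str] = field(default_factory=list)
    failed_stage: str = ""


def all_tests_pass(outcomes: List[bool]) -> bool:
    """The build counts as passing only if 100% of the tests pass."""
    return all(outcomes)


def run_pipeline(stages: List[Tuple[str, Callable[[], bool]]]) -> BuildResult:
    """Run stages in order; stop at the first failure so later stages never see a bad build."""
    result = BuildResult(succeeded=True)
    for name, step in stages:
        if not step():
            result.succeeded = False
            result.failed_stage = name
            break
        result.completed.append(name)
    return result


# Hypothetical stages; one failing test is enough to stop packaging and registration.
stages = [
    ("compile", lambda: True),
    ("test", lambda: all_tests_pass([True, False, True])),
    ("package", lambda: True),
    ("register", lambda: True),
]
result = run_pipeline(stages)
print(result.succeeded, result.completed, result.failed_stage)
```

Halting at the first failed stage is what keeps a broken build from ever reaching the package repository.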

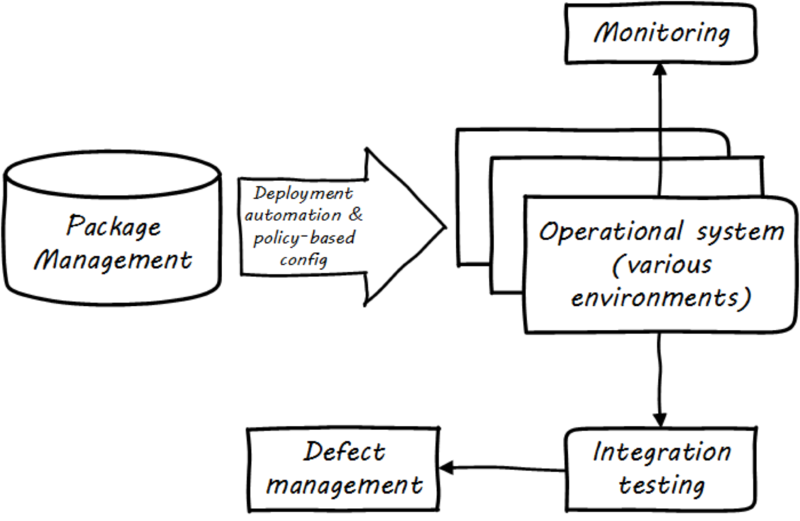

Continuous Delivery

Once the software is compiled and built, the executable files that can be installed and run operationally should be checked into a package manager. At that point, the last mile steps can be taken, and the now tested and built software can be deployed to pre-production or production environments (see Deployment). The software can undergo usability testing, load testing, integration testing, and so forth. Once those tests are passed, it can be deployed to production.

Moving new code into production has always been a risky procedure. Changing a running system always entails some uncertainty. However, the practice of Infrastructure as Code coupled with increased virtualization has reduced the risk. Often, a rolling release strategy is employed so that code is deployed to small sets of servers while other servers continue to service the load. This requires careful design to allow the new and old code to co-exist at least for a brief time.
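A rolling release can be sketched as follows, under simplifying assumptions: the fleet is a dictionary mapping server names to versions, and a caller-supplied `is_healthy` function (hypothetical) reports whether a server is serving correctly after the update. Real deployment tools also drain traffic, wait for warm-up, and record history.

```python
def rolling_release(servers, new_version, batch_size, is_healthy):
    """Update `servers` (name -> version) one batch at a time.

    Returns True if every batch was updated. If a batch is unhealthy, that batch
    is rolled back, the release halts, and False is returned; earlier batches
    stay on the new version and untouched servers stay on the old one.
    """
    names = sorted(servers)
    for start in range(0, len(names), batch_size):
        batch = names[start:start + batch_size]
        previous = {name: servers[name] for name in batch}
        for name in batch:
            servers[name] = new_version
        if not all(is_healthy(name) for name in batch):
            servers.update(previous)  # roll back only the failing batch
            return False
    return True


fleet = {"web1": "v1", "web2": "v1", "web3": "v1", "web4": "v1"}
completed = rolling_release(fleet, "v2", batch_size=2, is_healthy=lambda name: name != "web3")
print(completed, fleet)
```

In the halted state both versions are serving at once, which is why the new and old code must be designed to co-exist.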

Checking built packages into a package manager is important so that the versions of software used in production are well controlled and consistent. The package manager can then be associated with some kind of deploy tool that keeps track of what versions are associated with which infrastructure.

Timing varies by organization. Some strive for true “continuous deployment”, in which the new code flows seamlessly from developer commit through build, test, package, and deploy. Others put gates in between the developer and check-in to mainline, or source-to-build, or package-to-deploy so that some human governance remains in the toolchain. This document goes into more detail on these topics in the section on digital operations.

The Concept of “Release”

Release management, and the concept of a “release”, are among the most important and widely seen concepts in all forms of digital management. Whether you work in a cutting-edge Agile startup with two people or in one of the largest banks with a portfolio of thousands of applications, releases are likely being used for coordination and communication.

What is a “release”? Betz defined it this way in other work: “A significant evolution in an IT service, often based on new systems development, coordinated with affected services and stakeholders”. Release management’s role is to “Coordinate the assembly of IT functionality into a coherent whole and deliver this package into a state in which the customer is getting the intended value” [31 p. 68, 31 p. 119].

Evidence of Notability

DevOps has not yet been fully recognized for its importance in academic guidance or peer-reviewed literature. Nevertheless, its influence is broad and notable. Significant publications include [92, 13, 136, 165, 166, 99]. Large international conferences (notably the DevOps Enterprise Summit, https://itrevolution.com/devops_events/) are dedicated to the topic, as are many smaller local events under the banner of "DevOpsDays" (https://www.devopsdays.org/).

Limitations

Like Agile, DevOps is primarily valuable in the development of new digital functionality. It has less relevance for organizations that choose to purchase digital functionality; e.g., as SaaS offerings. While it includes the fragment "Ops", it does not cover the full range of operational topics covered in the Operations Competency Area, such as help desk and field services.

Related Topics

APIs, Microservices, and Cloud-Native

This document has now covered modern infrastructure, including container-based infrastructure available via Cloud providers, and application development from waterfall, through Agile, and on to DevOps. The industry term for the culmination of all of these trends is "cloud-native". The Cloud Native Computing Foundation (CNCF) defines "cloud-native" as follows:

While this document does not cover specific programming languages or techniques, there are leading practices for building modern applications that are notable and should be understood by all Digital Practitioners. In software construction a programming language and execution environment must be chosen, but this choice is only the start. Innumerable design choices are required in structuring software, and the quality of these choices will affect the software’s ultimate success and value.

Early computer programs tended to be "monolithic"; that is, they were often built as one massive set of logic and their internal structure might be very complex and hard to understand. (In fact, considerable research has been performed on the limitations of human comprehension when faced with software systems of high complexity.) Monolithic programs also did not lend themselves to re-use, and therefore the same logic might need to be written over and over again. The use of "functions" and re-usable "libraries" became commonplace, so that developers were not continuously rewriting the same thing.

Two of the most critical concepts in software design are coupling and cohesion. In one of the earliest discussions of coupling, Ed Yourdon states:

"Coupling [is] the probability that in coding, debugging, or modifying one module, a programmer will have to take into account something about another module. If two modules are highly coupled, then there is a high probability that a programmer trying to modify one of them will have to make a change to the other. Clearly, total systems cost will be strongly influenced by the degree of coupling between modules." [312]

This is not merely a technical concern; as Yourdon implies, highly-coupled designs will result in higher system costs.

Cohesion is the opposite idea: that a given module should do one thing and do it well. Software engineers have been taught to develop highly-cohesive, loosely-coupled systems since at least the early 1970s, and these ideas continue to underlie the most modern trends in digital systems delivery. The latest evolution is the concept of cloud-native systems, which achieve these ideals through APIs, microservices, and container-based infrastructure.

Application Programming Interfaces

Three smaller software modules may be able to do the job of one monolithic program; however, those three modules must then communicate in some form. There are a variety of ways that this can occur; for example, communication may be via a shared data store. The preferred approach, however, is that communication occur over APIs.

An API is the public entry point in and out of a software component. It can be understood as a sort of contract; it represents an expectation that if you send a message or signal in a precisely specified format to the API, you will receive a specific, expected response. For example, your online banking service can be seen as having an API. You send it your name, password, and an indication that you want your account balance, and it will return your account balance. In pseudocode, the API might look like:

GetAccountBalance(user_name, password, account_number) returns amount
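The pseudocode above could be rendered as a runnable sketch along these lines. Everything beyond the three parameters and the returned amount is an assumption made for illustration: the in-memory user and balance tables, the `AuthenticationError` type, and the sample account number are hypothetical, and a real banking service would never hold plaintext passwords.

```python
from decimal import Decimal


class AuthenticationError(Exception):
    """Raised when the caller's credentials are not accepted."""


# Hypothetical in-memory data standing in for the bank's real systems.
_USERS = {"mary": "s3cret"}
_BALANCES = {("mary", "CHK-001"): Decimal("1250.75")}


def get_account_balance(user_name: str, password: str, account_number: str) -> Decimal:
    """The contract: valid credentials plus a known account yield that account's balance."""
    if _USERS.get(user_name) != password:
        raise AuthenticationError("unknown user or wrong password")
    try:
        return _BALANCES[(user_name, account_number)]
    except KeyError:
        raise LookupError(f"no account {account_number!r} for user {user_name!r}") from None


print(get_account_balance("mary", "s3cret", "CHK-001"))
```

The caller depends only on the signature and the documented errors, not on how or where balances are stored; that separation is what makes the API a contract.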

The modern digital world runs on APIs; they are pervasive throughout digital interactions. They operate at different levels of the digital stack; your bank balance request might be transmitted by HTTP, which is a lower-level set of APIs for all web traffic. At scale, APIs present a challenge of management: how do you cope with thousands of APIs? Mechanisms must be created for standardizing, inventorying, reporting on status, and many other concerns.

Microservices

APIs can be accessed in various ways. For example, a developer might incorporate a "library" in a program she is writing. The library (for example, one that supports trigonometric functions) has a set of APIs that are available only if the library is compiled into the developer’s program, and accessible only while the program itself is running. Also, in this case, the API is dependent on the programming language; in general, a C++ library will not work in Java.

Increasingly, however, with the rise of the Internet, programs are continually "up" and running, and available to any other program that can communicate over the Internet, or an organization’s internal network. Programs that are continually run in this fashion, with attention to their availability and performance, are called "services". In some cases, a program or service may only be available as a visual web page. While this is still an API of a sort, many other services are available as direct API access; no web browser is required. Rather, services are accessed through protocols such as REST over HTTP. In this manner, a program written in Java can easily communicate with one written in C++. This idea is not new; many organizations started moving towards Service-Oriented Architecture (SOA) in the late 1990s and early 2000s. More recently, discussions of SOA have largely been replaced by attention to microservices.
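To make the idea of direct API access concrete, here is a minimal sketch of a service that answers an HTTP request with JSON, plus a client that calls it. It uses only Python's standard library; the URL path, the account identifier, and the response fields are hypothetical. Because the exchange is plain HTTP and JSON, the client could equally be written in Java or C++.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer


class BalanceHandler(BaseHTTPRequestHandler):
    """Answer GET requests for one hypothetical resource with a JSON document."""

    def do_GET(self):
        if self.path == "/accounts/CHK-001/balance":
            body = json.dumps({"account": "CHK-001", "amount": "1250.75"}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404, "Not Found")

    def log_message(self, format, *args):
        pass  # suppress the default per-request logging in this sketch


def start_service():
    """Start the service on a free local port and return the server object."""
    server = HTTPServer(("127.0.0.1", 0), BalanceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_balance(port):
    """Client side: issue the request and decode the JSON response."""
    connection = HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        connection.request("GET", "/accounts/CHK-001/balance")
        response = connection.getresponse()
        return response.status, json.loads(response.read().decode("utf-8"))
    finally:
        connection.close()
```

A caller would start the service with `start_service()`, pass `server.server_address[1]` to `fetch_balance`, and finish with `server.shutdown()`.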

Sam Newman, in Building Microservices, provides the following definition: "Microservices are small, autonomous services that work together" [208]. Breaking this down:

- "Small" is a relative term

  Newman endorses a heuristic that it should be possible to rewrite the microservice in two weeks. Matthew Skelton and Manuel Pais in Team Topologies [262] emphasize that optimally-sized teams have an upper bound to their "cognitive capacity"; this is also a pragmatic limit on the size of an effective microservice.

- "Autonomous" means "loosely coupled" as discussed above, both in terms of the developer’s perspective as well as the operational perspective

  Each microservice runs independently, typically on its own virtual machines or containers.

Newman observes that microservices provide the following benefits:

- Technology flexibility: as noted above, microservices may be written in any language and yet communicate over common protocols and be managed in a common framework

- Resilience: failure of one microservice should not result in failure of an entire digital product

- Scalability: monolithic applications typically must be scaled as one unit; with microservices, just those units under higher load can have additional capacity allocated

- Ease of deployment: because microservices are small and loosely coupled, change is less risky; see The DevOps Consensus as Systems Thinking

- Organizational alignment: large, monolithic codebases often encounter issues with unclear ownership; microservices are typically each owned by one team, although this is not a hard and fast rule

- Composability: microservices can be combined and re-combined ("mashed up") in endless variations, both within and across organizational boundaries; an excellent example of this is Google Maps, which is widely used by many other vendors (e.g. Airbnb™, Lyft™) when location information is needed

- Replaceability: because they are loosely coupled and defined by their APIs, a microservice can be replaced without replacing the rest of a broader system; for example, a microservice written in Java can be replaced by one written in Go, as long as the APIs remain identical
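Replaceability in particular lends itself to a small sketch. The example below uses in-process classes as stand-ins for two implementations of the same service; in practice the two would sit behind a network API and could be written in different languages. The `TaxService` interface, both implementations, and the 8% rate are hypothetical.

```python
from typing import Protocol


class TaxService(Protocol):
    """The API that callers depend on; any implementation honoring it is acceptable."""

    def tax_cents(self, amount_cents: int) -> int: ...


class LegacyTaxService:
    """Stand-in for the original implementation."""

    RATE_PERCENT = 8

    def tax_cents(self, amount_cents: int) -> int:
        return amount_cents * self.RATE_PERCENT // 100


class RewrittenTaxService:
    """Stand-in for a replacement that keeps the API identical."""

    def __init__(self, rate_percent: int = 8) -> None:
        self._rate_percent = rate_percent

    def tax_cents(self, amount_cents: int) -> int:
        return (amount_cents * self._rate_percent) // 100


def checkout_total(amount_cents: int, tax_service: TaxService) -> int:
    """The caller knows only the API, so either implementation can be supplied."""
    return amount_cents + tax_service.tax_cents(amount_cents)


print(checkout_total(1000, LegacyTaxService()), checkout_total(1000, RewrittenTaxService()))
```

Because `checkout_total` is coupled only to the interface, swapping the implementation requires no change to the calling code.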

The Twelve-Factor App

A number of good practices are associated with microservices success. One notable representation of this broader set of concerns is known as the "twelve-factor app" (see https://12factor.net/). To quote [302]:

Excerpts from the Twelve-Factor App Website

- Codebase

One codebase tracked in revision control, many deploys

A copy of the revision tracking database is known as a code repository, often shortened to code repo or just repo … A codebase is any single repo (in a centralized revision control system like Subversion), or any set of repos who share a root commit (in a decentralized revision control system like Git). Twelve-factor principles imply continuous integration.

- Dependencies

Explicitly declare and isolate dependencies

A twelve-factor app never relies on implicit existence of system-wide packages. It declares all dependencies, completely and exactly, via a dependency declaration manifest. Furthermore, it uses a dependency isolation tool during execution to ensure that no implicit dependencies “leak in” from the surrounding system. The full and explicit dependency specification is applied uniformly to both production and development.

- Configuration management

Store config in the environment

An app’s config is everything that is likely to vary between deploys (staging, production, developer environments, etc.). Apps sometimes store config as constants in the code. This is a violation of twelve-factor, which requires strict separation of config from code. Config varies substantially across deploys, code does not. Typical configuration values include server or hostnames, database names and locations, and (critically) authentication and authorization information (e.g., usernames and passwords, or private/public keys).

- Backing services

Treat backing services as attached resources

A backing service [aka a resource] is any service the app consumes over the network as part of its normal operation. Examples include data stores (such as MySQL or CouchDB®), messaging/queueing systems (such as RabbitMQ® or Beanstalkd), SMTP services for outbound email (such as Postfix), and caching systems (such as Memcached).

In addition to these locally-managed services, the app may also have services provided and managed by third parties. The code for a twelve-factor app makes no distinction between local and third-party services. To the app, both are attached resources, accessed via a URL or other locator/credentials stored in the config. A deploy of the twelve-factor app should be able to swap out a local MySQL database with one managed by a third party - such as Amazon Relational Database Service (Amazon RDS) - without any changes to the app’s code … only the resource handle in the config needs to change.

- Build, release, run

Strictly separate build and run stages

A codebase is transformed into a (non-development) deploy through three [strictly separated] stages: The build stage is a transform which converts a code repo into an executable bundle known as a build. Using a version of the code at a commit specified by the deployment process, the build stage fetches vendors' dependencies and compiles binaries and assets … The release stage takes the build produced by the build stage and combines it with the deploy’s current config. The resulting release contains both the build and the config and is ready for immediate execution in the execution environment … The run stage (also known as “runtime”) runs the app in the execution environment, by launching some set of the app’s processes against a selected release.

- Processes

Execute the app as one or more stateless processes

Twelve-factor processes are stateless and share nothing. Any data that needs to persist must be stored in a stateful backing service, typically a database … The memory space or file system of the process can be used as a brief, single-transaction cache. For example, downloading a large file, operating on it, and storing the results of the operation in the database. The twelve-factor app never assumes that anything cached in memory or on disk will be available on a future request or job – with many processes of each type running, chances are high that a future request will be served by a different process.

- Port binding

Export services via port binding

Web apps are sometimes executed inside a web server container. For example, PHP apps might run as a module inside Apache® HTTPD, or Java apps might run inside Tomcat … The twelve-factor app is completely self-contained and does not rely on runtime injection of a web server into the execution environment to create a web-facing service. The web app exports HTTP as a service by binding to a port, and listening to requests coming in on that port … In a local development environment, the developer visits a service URL like http://localhost:5000/ to access the service exported by their app. In deployment, a routing layer handles routing requests from a public-facing hostname to the port-bound web processes.

- Concurrency

Scale out via the process model

Any computer program, once run, is represented by one or more processes. Web apps have taken a variety of process-execution forms. For example, PHP processes run as child processes of Apache, started on demand as needed by request volume. Java processes take the opposite approach, with the Java Virtual Machine (JVM) providing one massive [process] that reserves a large block of system resources (CPU and memory) on startup, with concurrency managed internally via threads. In both cases, the running process(es) are only minimally visible to the developers of the app … In the twelve-factor app, processes are a first-class citizen. Processes in the twelve-factor app take strong cues from the UNIX process model for running service daemons. Using this model, the developer can architect their app to handle diverse workloads by assigning each type of work to a process type. For example, HTTP requests may be handled by a web process, and long-running background tasks handled by a worker process.

- Disposability

Maximize robustness with fast startup and graceful shutdown

The twelve-factor app’s processes are disposable, meaning they can be started or stopped at a moment’s notice. This facilitates fast elastic scaling, rapid deployment of code or config changes, and robustness of production deploys … Processes should strive to minimize startup time. Ideally, a process takes a few seconds from the time the launch command is executed until the process is up and ready to receive requests or jobs … Processes shut down gracefully when they receive a SIGTERM signal from the process manager. For a web process, graceful shutdown is achieved by ceasing to listen on the service port (thereby refusing any new requests), allowing any current requests to finish, and then exiting … For a worker process, graceful shutdown is achieved by returning the current job to the work queue … Processes should also be robust against sudden death … A twelve-factor app is architected to handle unexpected, non-graceful terminations.

- Dev/prod parity

Keep development, staging, and production as similar as possible

Historically, there have been substantial gaps between development (a developer making live edits to a local deploy of the app) and production (a running deploy of the app accessed by end users). These gaps manifest in three areas … The time gap: A developer may work on code that takes days, weeks, or even months to go into production … The personnel gap: Developers write code, operations engineers deploy it … The tools gap: Developers may be using a stack like NGINX™, SQLite, and OS X, while the production deploy uses Apache, MySQL, and Linux … The twelve-factor app is designed for continuous deployment by keeping [these gaps] between development and production small.

| | Traditional app | Twelve-factor app |
|---|---|---|
| Time between deploys | Weeks | Hours |
| Code authors versus code deployers | Different people | Same people |
| Dev versus production environments | Divergent | As similar as possible |

- Logs

Logs are the stream of aggregated, time-ordered events collected from the output streams of all running processes and backing services. Logs in their raw form are typically a text format with one event per line (though backtraces from exceptions may span multiple lines). Logs have no fixed beginning or end, but flow continuously as long as the app is operating … A twelve-factor app never concerns itself with routing or storage of its output stream … Instead, each running process writes its event stream, unbuffered, to stdout. During local development, the developer will view this stream in the foreground of their terminal to observe the app’s behavior … In staging or production deploys, each process’ stream will be captured by the execution environment, collated together with all other streams from the app, and routed to one or more final destinations for viewing and long-term archival.

- Admin processes

The process formation is the array of processes that are used to do the app’s regular business (such as handling web requests) as it runs. Separately, developers will often wish to do one-off administrative or maintenance tasks for the app, such as:

- Running database migrations …

- Running a console … to run arbitrary code or inspect the app’s models against the live database …

- Running one-time scripts committed into the app’s repo …

One-off admin processes should be run in an identical environment as the regular long-running processes of the app. They run against a release, using the same codebase and config as any process run against that release. Admin code must ship with application code to avoid synchronization issues.
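Three of the factors excerpted above (configuration in the environment, backing services as attached resources, and the separation of build from release) can be sketched together. This is an illustration under stated assumptions, not code from the twelve-factor site: the variable names `DATABASE_URL` and `LOG_LEVEL`, the `Build` and `Release` types, and the sample URLs are all hypothetical.

```python
import os
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Build:
    commit: str  # the version of the code this build was produced from


@dataclass(frozen=True)
class Release:
    build: Build
    config: dict


def load_config(environ=os.environ):
    """Config: read everything that varies between deploys from the environment."""
    return {
        "DATABASE_URL": environ["DATABASE_URL"],  # required; raises KeyError if absent
        "LOG_LEVEL": environ.get("LOG_LEVEL", "INFO"),
    }


def database_host(config):
    """Backing services: the database is just a locator held in config."""
    return urlparse(config["DATABASE_URL"]).hostname


def make_release(build, environ=os.environ):
    """Build, release, run: a release is one build combined with one deploy's config."""
    return Release(build=build, config=load_config(environ))


build = Build(commit="abc123")
staging = make_release(build, {"DATABASE_URL": "mysql://app@localhost:3306/shop"})
production = make_release(build, {"DATABASE_URL": "mysql://app@db.example.com:3306/shop"})
print(database_host(staging.config), database_host(production.config))
```

The same build serves both deploys; swapping a local database for a managed one changes only the resource handle in the environment, not the code.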

It is strongly recommended that the reader review the unabridged set of guidelines at 12Factor.net.
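As a further illustration of the disposability and logs factors, the sketch below shows a worker that writes its event stream to stdout and stops cleanly when asked. The `Worker` class, the job list, and the logger name are hypothetical, and the queue is an in-memory list; in a real deploy the unfinished jobs would remain in a backing queue service.

```python
import logging
import signal
import sys


def configure_logging():
    """Logs: write one event per line to stdout; routing and storage belong to the environment."""
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger = logging.getLogger("app")
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


class Worker:
    """Disposability: stop between jobs on request, leaving unstarted work on the queue."""

    def __init__(self, jobs, logger):
        self.queue = list(jobs)
        self.done = []
        self.stopping = False
        self.logger = logger

    def request_stop(self, signum=None, frame=None):
        """Usable as a signal handler: ask the run loop to stop before the next job."""
        self.stopping = True

    def run(self):
        while self.queue and not self.stopping:
            job = self.queue.pop(0)
            self.done.append(job)
            self.logger.info("finished job %s", job)
        self.logger.info("exiting with %d job(s) left on the queue", len(self.queue))


def install_handlers(worker):
    """Register the stop request for SIGTERM; must be called from the main thread."""
    signal.signal(signal.SIGTERM, worker.request_stop)
```

A process manager that sends SIGTERM would then see the worker finish its current job and exit, with the remaining jobs still queued for another process.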

Evidence of Notability

Cloud-native approaches are, at the time of publication, receiving enormous industry attention. KubeCon (the leading conference of the CNCF) attracts wide interest, and all major cloud providers and scores of smaller firms participate in the CNCF ecosystem.

Limitations

Trillions of dollars of IT investment have been made in older architectures: bare-metal computing, tightly-coupled systems, stateful applications, and every imaginable permutation of not following guidance such as the twelve-factor methodology. The Digital Practitioner should be prepared to encounter this messy real world.

Related Topics

Securing Applications and Digital Products

Description

Application security includes a broad range of specialized areas, including secure software design and development, threat modeling, vulnerability assessment, penetration testing, and the impact of security on DevOps (and vice versa). As with other aspects of security, the move to cloud computing brings some changes to application security. The CSA guidance on cloud security specifically addresses application security considerations in cloud environments in domain 10 of their latest cloud security guidance.

An important element of application security is the Secure Software Development Lifecycle (SSDLC), an approach toward developing software in a secure manner. Numerous frameworks and resources are available to follow, including from Microsoft (Security Development Lifecycle), NIST (NIST SP 800-64 Rev. 2), ISO/IEC (ISO/IEC 27034-1:2011), and the Open Web Application Security Project (OWASP Top Ten). In addition, information resources available from MITRE, including Common Weakness Enumeration (CWE) and Common Vulnerabilities and Exposures (CVE), are helpful to development teams striving to develop secure code.

A basic approach to secure design and development will include these phases: Training – Define – Design – Develop – Test.[2] A component of an SSDLC is threat modeling. Good resources on threat modeling are available from Microsoft and from The Open Group.

It is worth noting that the move to cloud computing affects all aspects of an SSDLC, because cloud services abstract various computing resources, and there are automation approaches used in cloud services that fundamentally change the ways in which software is developed, tested, and deployed in cloud services versus in on-premises computing. In addition, there are significant differences in the degree of visibility and control that is provided to the customer, including availability of system logs at various points in the computing stack.

Application security will also include secure deployment, encompassing code review, unit, regression, and functional testing, and static and dynamic application security testing.

Other key aspects of application security include vulnerability assessment and penetration testing. Both have differences in cloud versus on-premises, as a customer’s ability to perform vulnerability scans and penetration tests may be restricted by contract by the CSP, and there may be technical issues relating to the type of cloud service, single versus multi-tenancy of the application, and so on.

Evidence of Notability

To be added in a future version.

Limitations

To be added in a future version.

Related Topics

- Open Enterprise Security Architecture (O-ESA) (The Open Group)

- Security Considerations in the System Development Life Cycle (NIST)

- Security Guidance for Critical Areas of Focus in Cloud Computing (CSA)

- Application Security - Part 1: Overview and Concepts, ISO/IEC 27034-1:2011 (ISO/IEC)

- https://cwe.mitre.org/, Common Weakness Enumeration and Common Vulnerabilities and Exposures (MITRE)